Clarified CQRS

Wednesday, December 9th, 2009.

After listening to how the community has interpreted Command-Query Responsibility Segregation, I think that the time has come for some clarification. Some have been tying it to Event Sourcing. Most have been overlaying their previous layered architecture assumptions on it. Here I hope to identify CQRS itself, and describe in which places it can connect to other patterns.


Why CQRS

Before describing the details of CQRS we need to understand the two main driving forces behind it: collaboration and staleness.

Collaboration refers to circumstances under which multiple actors will be using/modifying the same set of data – whether or not the intention of the actors is actually to collaborate with each other. There are often rules which indicate which user can perform which kind of modification and modifications that may have been acceptable in one case may not be acceptable in others. We’ll give some examples shortly. Actors can be human like normal users, or automated like software.

Staleness refers to the fact that in a collaborative environment, once data has been shown to a user, that same data may have been changed by another actor – it is stale. Almost any system which makes use of a cache is serving stale data – often for performance reasons. What this means is that we cannot entirely trust our users’ decisions, as they could have been made based on out-of-date information.

Standard layered architectures don’t explicitly deal with either of these issues. While putting everything in the same database may be one step in the direction of handling collaboration, staleness is usually exacerbated in those architectures by the use of caches as a performance-improving afterthought.

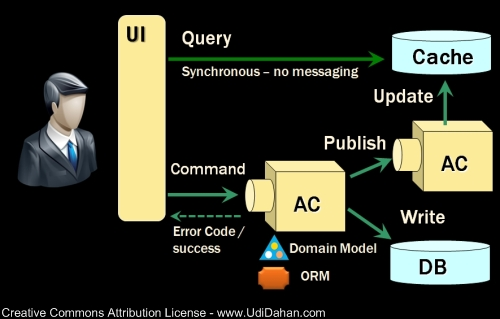

A picture for reference

I’ve given some talks about CQRS using this diagram to explain it:

The boxes named AC are Autonomous Components. We’ll describe what makes them autonomous when discussing commands. But before we go into the complicated parts, let’s start with queries:

Queries

If the data we’re going to be showing users is stale anyway, is it really necessary to go to the master database and get it from there? Why transform those 3rd normal form structures to domain objects if we just want data – not any rule-preserving behaviors? Why transform those domain objects to DTOs to transfer them across a wire, and who said that wire has to be exactly there? Why transform those DTOs to view model objects?

In short, it looks like we’re doing a heck of a lot of unnecessary work based on the assumption that reusing code that has already been written will be easier than just solving the problem at hand. Let’s try a different approach:

How about we create an additional data store whose data can be a bit out of sync with the master database? I mean, the data we’re showing the user is stale anyway, so why not reflect that in the data store itself? We’ll come up with an approach later to keep this data store more or less in sync.

Now, what would be the correct structure for this data store? How about just like the view model? One table for each view. Then our client could simply SELECT * FROM MyViewTable (or possibly pass in an ID in a where clause), and bind the result to the screen. That would be just as simple as can be. You could wrap that up with a thin facade if you feel the need, or with stored procedures, or using AutoMapper which can simply map from a data reader to your view model class. The thing is that the view model structures are already wire-friendly, so you don’t need to transform them to anything else.
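As a minimal sketch of the "one table for each view" idea, here is what the read side could look like. It is written in Python with the built-in `sqlite3` module purely for brevity; the table `CustomerSummaryView`, its columns, and the function `load_customer_summary` are all made up for illustration.

```python
import sqlite3

# Hypothetical query store: one table per screen, already shaped for display.
conn = sqlite3.connect(":memory:")
conn.row_factory = sqlite3.Row  # rows can be converted straight to dicts
conn.execute(
    "CREATE TABLE CustomerSummaryView ("
    " CustomerId INTEGER PRIMARY KEY,"
    " DisplayName TEXT, Status TEXT, OpenOrderCount INTEGER)"
)
conn.execute(
    "INSERT INTO CustomerSummaryView VALUES (?, ?, ?, ?)",
    (42, "Ms. Jane Smith", "Regular", 3),
)


def load_customer_summary(connection, customer_id):
    """Fetch one row of the view table and return it as a plain dict.

    No domain objects and no DTO mapping: the row already is the view model.
    """
    row = connection.execute(
        "SELECT * FROM CustomerSummaryView WHERE CustomerId = ?",
        (customer_id,),
    ).fetchone()
    return dict(row) if row is not None else None


view_model = load_customer_summary(conn, 42)
```

The dict that comes back can be bound to the screen or serialized as-is, which is the whole point: there is nothing left to transform.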

You could even consider taking that data store and putting it in your web tier. It’s just as secure as an in-memory cache in your web tier. Give your web servers SELECT only permissions on those tables and you should be fine.

Query Data Storage

While you can use a regular database as your query data store it isn’t the only option. Consider that the query schema is in essence identical to your view model. You don’t have any relationships between your various view model classes, so you shouldn’t need any relationships between the tables in the query data store.

So do you actually need a relational database?

The answer is no, but for all practical purposes and due to organizational inertia, it is probably your best choice (for now).

Scaling Queries

Since your queries are now being performed off of a data store separate from your master database, and there is no assumption that the data that’s being served is 100% up to date, you can easily add more instances of these stores without worrying that they don’t contain the exact same data. The same mechanism that updates one instance can be used for many instances, as we’ll see later.

This gives you cheap horizontal scaling for your queries. Also, since you’re not doing nearly as much transformation, the latency per query goes down as well. Simple code is fast code.

Data modifications

Since our users are making decisions based on stale data, we need to be more discerning about which things we let through. Here’s a scenario explaining why:

Let’s say we have a customer service representative who is on the phone with a customer. This user is looking at the customer’s details on the screen and wants to make them a ‘preferred’ customer, as well as modifying their address, changing their title from Ms to Mrs, changing their last name, and indicating that they’re now married. What the user doesn’t know is that after opening the screen, an event arrived from the billing department indicating that this same customer doesn’t pay their bills – they’re delinquent. At this point, our user submits their changes.

Should we accept their changes?

Well, we should accept some of them, but not the change to ‘preferred’, since the customer is delinquent. But writing those kinds of checks is a pain – we need to do a diff on the data, infer what the changes mean, which ones are related to each other (name change, title change) and which are separate, identify which data to check against – not just compared to the data the user retrieved, but compared to the current state in the database, and then reject or accept.

Unfortunately for our users, we tend to reject the whole thing if any part of it is off. At that point, our users have to refresh their screen to get the up-to-date data, and retype in all the previous changes, hoping that this time we won’t yell at them because of an optimistic concurrency conflict.

As we get larger entities with more fields on them, we also get more actors working with those same entities, and the higher the likelihood that something will touch some attribute of them at any given time, increasing the number of concurrency conflicts.

If only there was some way for our users to provide us with the right level of granularity and intent when modifying data. That’s what commands are all about.

Commands

A core element of CQRS is rethinking the design of the user interface to enable us to capture our users’ intent such that making a customer preferred is a different unit of work for the user than indicating that the customer has moved or that they’ve gotten married. Using an Excel-like UI for data changes doesn’t capture intent, as we saw above.
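To illustrate what "capturing intent" might mean in code, here is a small sketch in Python. The command names and fields are hypothetical; the only point is that the single "save customer" operation from the scenario above becomes three separate, individually acceptable or rejectable units of work.

```python
from dataclasses import dataclass
from uuid import UUID, uuid4


@dataclass(frozen=True)
class MakeCustomerPreferred:
    customer_id: UUID


@dataclass(frozen=True)
class RecordCustomerMoved:
    customer_id: UUID
    new_address: str


@dataclass(frozen=True)
class RecordCustomerMarried:
    customer_id: UUID
    new_title: str
    new_last_name: str  # related changes travel together in one command


# What the customer service rep did on the call, expressed as intent
# rather than as a diff over one large customer record.
customer_id = uuid4()
commands = [
    MakeCustomerPreferred(customer_id),
    RecordCustomerMoved(customer_id, "12 Example Street"),
    RecordCustomerMarried(customer_id, "Mrs", "Jones"),
]
```

With this shape, the server can reject the first command because of delinquency while still accepting the other two, without diffing anything.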

We could even consider allowing our users to submit a new command even before they’ve received confirmation on the previous one. We could have a little widget on the side showing the user their pending commands, checking them off asynchronously as we receive confirmation from the server, or marking them with an X if they fail. The user could then double-click that failed task to find information about what happened.

Note that the client sends commands to the server – it doesn’t publish them. Publishing is reserved for events which state a fact – that something has happened, and that the publisher has no concern about what receivers of that event do with it.

Commands and Validation

In thinking through what could make a command fail, one topic that comes up is validation. Validation is different from business rules in that it states a context-independent fact about a command. Either a command is valid, or it isn’t. Business rules on the other hand are context dependent.

In the example we saw before, the data our customer service rep submitted was valid; it was only the billing event arriving earlier that required the command to be rejected. Had that billing event not arrived, the data would have been accepted.

Even though a command may be valid, there still may be reasons to reject it.

As such, validation can be performed on the client, checking that all fields required for that command are there, number and date ranges are OK, that kind of thing. The server would still validate all commands that arrive, not trusting clients to do the validation.
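The distinction between validation and business rules can be sketched roughly like this (Python, for illustration only). The `discount_percent` field and both function names are assumptions, added just so there is a range to check and a piece of state to depend on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MakeCustomerPreferred:
    customer_id: int
    discount_percent: int  # hypothetical field, only here to have a range to check


def validate(command):
    """Context-independent: the answer depends on the command alone,
    so the same function can run on the client and again on the server."""
    errors = []
    if command.customer_id <= 0:
        errors.append("customer_id must be a positive number")
    if not 0 <= command.discount_percent <= 100:
        errors.append("discount_percent must be between 0 and 100")
    return errors


def check_business_rules(command, customer_is_delinquent):
    """Context-dependent: the answer depends on current server-side state."""
    if customer_is_delinquent:
        return ["delinquent customers cannot be made preferred"]
    return []


command = MakeCustomerPreferred(customer_id=42, discount_percent=10)
validation_errors = validate(command)
rule_errors = check_business_rules(command, customer_is_delinquent=True)
```

Here the command is perfectly valid, yet it is still rejected, because of state the client could not have known about.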

Rethinking UIs and commands in light of validation

The client can make use of the query data store when validating commands. For example, before submitting a command that the customer has moved, we can check that the street name exists in the query data store.

At that point, we may rethink the UI and have an auto-completing text box for the street name, thus ensuring that the street name we’ll pass in the command will be valid. But why not take things a step further? Why not pass in the street ID instead of its name? Have the command represent the street not as a string, but as an ID (int, guid, whatever).

On the server side, the only reason that such a command would fail would be due to concurrency – that someone had deleted that street and that that hadn’t been reflected in the query store yet; a fairly exceptional set of circumstances.

Reasons valid commands fail and what to do about it

So we’ve got a well-behaved client that is sending valid commands, yet the server still decides to reject them. Often the circumstances for the rejection are related to other actors changing state relevant to the processing of that command.

In the CRM example above, it is only because the billing event arrived first. But “first” could be a millisecond before our command. What if our user pressed the button a millisecond earlier? Should that actually change the business outcome? Shouldn’t we expect our system to behave the same when observed from the outside?

So, if the billing event arrived second, shouldn’t that revert preferred customers to regular ones? Not only that, but shouldn’t the customer be notified of this, like by sending them an email? In which case, why not have this be the behavior for the case where the billing event arrives first? And if we’ve already got a notification model set up, do we really need to return an error to the customer service rep? I mean, it’s not like they can do anything about it other than notifying the customer.

So, if we’re not returning errors to the client (who is already sending us valid commands), maybe all we need to do on the client when sending a command is to tell the user “thank you, you will receive confirmation via email shortly”. We don’t even need the UI widget showing pending commands.
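One way to picture this reasoning is a customer entity whose end state does not depend on whether the command or the billing event arrives first. The following Python sketch is only an illustration under that assumption; the class, method names, and notification text are invented.

```python
from dataclasses import dataclass, field

NOTICE = "preferred status is not available while the account is delinquent"


@dataclass
class Customer:
    preferred: bool = False
    delinquent: bool = False
    notifications: list = field(default_factory=list)  # e.g. emails to send

    def make_preferred(self):
        if self.delinquent:
            # No error goes back to the rep; the customer is notified instead.
            self.notifications.append(NOTICE)
        else:
            self.preferred = True

    def mark_delinquent(self):
        self.delinquent = True
        if self.preferred:
            # Arriving second reverts the earlier decision and notifies.
            self.preferred = False
            self.notifications.append(NOTICE)


def replay(steps):
    """Apply the named operations, in order, to a fresh customer."""
    customer = Customer()
    for step in steps:
        getattr(customer, step)()
    return customer


command_first = replay(["make_preferred", "mark_delinquent"])
event_first = replay(["mark_delinquent", "make_preferred"])
```

Both orderings end with a delinquent, non-preferred customer and exactly one notification, so a millisecond either way no longer changes the business outcome.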

Commands and Autonomy

What we see is that in this model, commands don’t need to be processed immediately – they can be queued. How fast they get processed is a question of Service-Level Agreement (SLA) and not architecturally significant. This is one of the things that makes that node that processes commands autonomous from a runtime perspective – we don’t require an always-on connection to the client.

Also, we shouldn’t need to access the query store to process commands – any state that is needed should be managed by the autonomous component – that’s part of the meaning of autonomy.

Another part is the issue of failed message processing due to the database being down or hitting a deadlock. There is no reason that such errors should be returned to the client – we can just roll back and try again. When an administrator brings the database back up, all the messages waiting in the queue will then be processed successfully and our users receive confirmation.
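The "roll back and try again" behavior can be sketched with an in-memory queue. This is a toy in Python, not how a real message bus or MSMQ works internally; `FakeDatabase`, `DatabaseUnavailable`, and `drain` are made-up names that only show that a message stays queued until it has been processed successfully.

```python
from collections import deque


class DatabaseUnavailable(Exception):
    """Stand-in for a transient failure such as an outage or a deadlock."""


class FakeDatabase:
    def __init__(self):
        self.available = False
        self.rows = []

    def save(self, row):
        if not self.available:
            raise DatabaseUnavailable()
        self.rows.append(row)


def drain(queue, database):
    """Process queued commands in order; stop at the first transient failure.

    The failing command stays at the head of the queue, so nothing is lost
    and no error is reported to the client.
    """
    processed = 0
    while queue:
        command = queue[0]        # peek, do not remove yet
        try:
            database.save(command)
        except DatabaseUnavailable:
            break                 # "roll back": leave the message queued
        queue.popleft()           # "commit": remove only after success
        processed += 1
    return processed


queue = deque(["MakeCustomerPreferred:42", "RecordCustomerMoved:42"])
db = FakeDatabase()
first_attempt = drain(queue, db)   # database is down, nothing is processed
db.available = True                # the administrator brings it back up
second_attempt = drain(queue, db)  # the waiting messages now go through
```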

The system as a whole is quite a bit more robust to any error conditions.

Also, since we don’t have queries going through this database any more, the database itself is able to keep more rows/pages in memory which serve commands, improving performance. When both commands and queries were being served off of the same tables, the database server was always juggling rows between the two.

Autonomous Components

While in the picture above we see all commands going to the same AC, we could logically have each command processed by a different AC, each with its own queue. That would give us visibility into which queue was the longest, letting us see very easily which part of the system was the bottleneck. While this is interesting for developers, it is critical for system administrators.

Since commands wait in queues, we can now add more processing nodes behind those queues (using the distributor with NServiceBus) so that we’re only scaling the part of the system that’s slow. No need to waste servers on any other requests.
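As a rough illustration of the visibility that one queue per command type gives, consider this Python sketch. The in-memory `queues` dictionary and the `send` and `longest_queue` functions are hypothetical; in practice you would read queue depths from your messaging infrastructure.

```python
from collections import defaultdict, deque

# One queue per command type, keyed by the command's name.
queues = defaultdict(deque)


def send(command_type, payload):
    """Route a command to the queue owned by its autonomous component."""
    queues[command_type].append(payload)


def longest_queue():
    """Return the name of the command type with the most waiting messages."""
    if not queues:
        return None
    return max(queues, key=lambda name: len(queues[name]))


send("MakeCustomerPreferred", {"customer_id": 42})
for customer_id in (1, 2, 3):
    send("RecordCustomerMoved", {"customer_id": customer_id})

bottleneck = longest_queue()
```

Whichever queue comes back is the one that deserves more processing nodes; the others can be left alone.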

Service Layers

Our command processing objects in the various autonomous components actually make up our service layer. The reason you don’t see this layer explicitly represented in CQRS is that it isn’t really there, at least not as an identifiable logical collection of related objects – here’s why:

In the layered architecture (AKA 3-Tier) approach, there is no statement about dependencies between objects within a layer, or rather it is implied to be allowed. However, when taking a command-oriented view on the service layer, what we see are objects handling different types of commands. Each command is independent of the other, so why should we allow the objects which handle them to depend on each other?

Dependencies are things which should be avoided, unless there is good reason for them.

Keeping the command handling objects independent of each other will allow us to more easily version our system, one command at a time, not needing even to bring down the entire system, given that the new version is backwards compatible with the previous one.

Therefore, keep each command handler in its own VS project, or possibly even in its own solution, thus guiding developers away from introducing dependencies in the name of reuse (it’s a fallacy). If you do decide, as a deployment concern, that you want to put them all in the same process feeding off of the same queue, you can ILMerge those assemblies and host them together, but understand that you will be undoing many of the benefits of your autonomous components.

Whither the domain model?

Although in the diagram above you can see the domain model beside the command-processing autonomous components, it’s actually an implementation detail. There is nothing that states that all commands must be processed by the same domain model. Arguably, you could have some commands be processed by transaction script, others using table module (AKA active record), as well as those using the domain model. Event-sourcing is another possible implementation.

Another thing to understand about the domain model is that it now isn’t used to serve queries. So the question is, why do you need to have so many relationships between entities in your domain model?

(You may want to take a second to let that sink in.)

Do we really need a collection of orders on the customer entity? In what command would we need to navigate that collection? In fact, what kind of command would need any one-to-many relationship? And if that’s the case for one-to-many, many-to-many would definitely be out as well. I mean, most commands only contain one or two IDs in them anyway.

Any aggregate operations that may have been calculated by looping over child entities could be pre-calculated and stored as properties on the parent entity. Following this process across all the entities in our domain would result in isolated entities needing nothing more than a couple of properties for the IDs of their related entities – “children” holding the parent ID, like in databases.

In this form, commands could be entirely processed by a single entity – voilà, an aggregate root that is a consistency boundary.
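A small sketch of what such isolated entities might look like, in Python for brevity. The entity shapes and the `record_order_placed` function are assumptions; the idea shown is that the parent keeps pre-calculated totals instead of a collection of children, and the child only holds the parent's ID.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    customer_id: int
    order_count: int = 0          # pre-calculated, no orders collection
    total_ordered_cents: int = 0  # kept up to date instead of summed on demand


@dataclass
class Order:
    order_id: int
    customer_id: int  # the "child" holds the parent's ID, like in a database
    amount_cents: int


def record_order_placed(customer, order):
    """Update the parent's running totals; only the customer is modified."""
    if order.customer_id != customer.customer_id:
        raise ValueError("order belongs to a different customer")
    customer.order_count += 1
    customer.total_ordered_cents += order.amount_cents


customer = Customer(customer_id=42)
record_order_placed(customer, Order(order_id=1, customer_id=42, amount_cents=1500))
record_order_placed(customer, Order(order_id=2, customer_id=42, amount_cents=2500))
```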

Persistence for command processing

Given that the database used for command processing is not used for querying, and that most (if not all) commands contain the IDs of the rows they’re going to affect, do we really need to have a column for every single domain object property? What if we just serialized the domain entity and put it into a single column, and had another column containing the ID? This sounds quite similar to key-value storage that is available in the various cloud providers. In which case, would you really need an object-relational mapper to persist to this kind of storage?

You could also pull out an additional property per piece of data where you’d want the “database” to enforce uniqueness.
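To make the idea tangible, here is a sketch of that storage layout in Python using the built-in `sqlite3` and `json` modules. The `Customers` table, the choice of `Email` as the uniqueness column, and the two functions are all assumptions made for illustration.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Customers ("
    " Id TEXT PRIMARY KEY,"
    " Email TEXT UNIQUE,"   # pulled out only so the store enforces uniqueness
    " Body TEXT NOT NULL)"  # the whole entity, serialized
)


def save_customer(connection, customer):
    """Insert or update one entity as a single serialized value."""
    body = json.dumps(customer)
    with connection:  # commits on success, rolls back on an exception
        updated = connection.execute(
            "UPDATE Customers SET Email = ?, Body = ? WHERE Id = ?",
            (customer["email"], body, customer["id"]),
        )
        if updated.rowcount == 0:
            connection.execute(
                "INSERT INTO Customers (Id, Email, Body) VALUES (?, ?, ?)",
                (customer["id"], customer["email"], body),
            )


def load_customer(connection, customer_id):
    row = connection.execute(
        "SELECT Body FROM Customers WHERE Id = ?", (customer_id,)
    ).fetchone()
    return json.loads(row[0]) if row is not None else None


save_customer(conn, {"id": "c1", "email": "jane@example.com", "preferred": False})
save_customer(conn, {"id": "c1", "email": "jane@example.com", "preferred": True})
loaded = load_customer(conn, "c1")

try:
    save_customer(conn, {"id": "c2", "email": "jane@example.com", "preferred": False})
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True  # the UNIQUE column did its job
```

Every access goes through the ID, and no object-relational mapper is involved.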

I’m not suggesting that you do this in all cases – rather just trying to get you to rethink some basic assumptions.

Let me reiterate

How you process the commands is an implementation detail of CQRS.

Keeping the query store in sync

After the command-processing autonomous component has decided to accept a command, modifying its persistent store as needed, it publishes an event notifying the world about it. This event often is the “past tense” of the command submitted:

MakeCustomerPreferredCommand -> CustomerHasBeenMadePreferredEvent

The publishing of the event is done transactionally together with the processing of the command and the changes to its database. That way, any kind of failure on commit will result in the event not being sent. This is something that should be handled by default by your message bus, and if you’re using MSMQ as your underlying transport, requires the use of transactional queues.
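The all-or-nothing effect can be demonstrated with a small Python sketch. Note that this swaps in a different technique from the one described above: instead of a message bus with transactional queues, it writes the event to an `Outbox` table in the same SQLite transaction as the state change. The table names, the handler, and the `fail_before_commit` flag are hypothetical.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Customers (Id INTEGER PRIMARY KEY, Preferred INTEGER NOT NULL)")
conn.execute("CREATE TABLE Outbox (Seq INTEGER PRIMARY KEY AUTOINCREMENT, Type TEXT, Body TEXT)")
conn.execute("INSERT INTO Customers VALUES (42, 0)")
conn.commit()


def handle_make_customer_preferred(connection, customer_id, fail_before_commit=False):
    """Change state and record the event in one transaction."""
    with connection:  # commits on success, rolls back on an exception
        connection.execute(
            "UPDATE Customers SET Preferred = 1 WHERE Id = ?", (customer_id,)
        )
        connection.execute(
            "INSERT INTO Outbox (Type, Body) VALUES (?, ?)",
            ("CustomerHasBeenMadePreferredEvent", json.dumps({"customer_id": customer_id})),
        )
        if fail_before_commit:
            raise RuntimeError("simulated failure on commit")


def snapshot(connection):
    """Return (preferred flag of customer 42, number of recorded events)."""
    preferred = connection.execute("SELECT Preferred FROM Customers WHERE Id = 42").fetchone()[0]
    events = connection.execute("SELECT COUNT(*) FROM Outbox").fetchone()[0]
    return preferred, events


try:
    handle_make_customer_preferred(conn, 42, fail_before_commit=True)
except RuntimeError:
    pass
after_failure = snapshot(conn)  # neither the state change nor the event survived

handle_make_customer_preferred(conn, 42)
after_success = snapshot(conn)  # both are there
```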

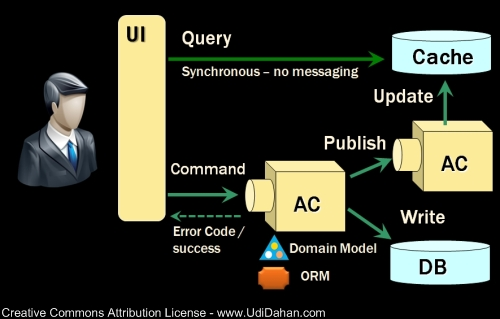

The autonomous component which processes those events and updates the query data store is fairly simple, translating from the event structure to the persistent view model structure. I suggest having an event handler per view model class (AKA per table).

Here’s the picture of all the pieces again:
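The event handler per view model class suggested above could be sketched like this, again in Python with `sqlite3` for brevity. The view table, the handler registry, and the function names are assumptions. The sketch also applies the same event to two query store instances, which is all it takes to keep several of them more or less in sync.

```python
import sqlite3


def new_query_store():
    """Create one (hypothetical) query store instance with a single view table."""
    store = sqlite3.connect(":memory:")
    store.execute(
        "CREATE TABLE CustomerSummaryView ("
        " CustomerId INTEGER PRIMARY KEY, DisplayName TEXT, Status TEXT)"
    )
    store.execute("INSERT INTO CustomerSummaryView VALUES (42, 'Ms. Jane Smith', 'Regular')")
    store.commit()
    return store


def update_customer_summary_view(store, event):
    """Handler that owns CustomerSummaryView: translate the event to a row update."""
    with store:
        store.execute(
            "UPDATE CustomerSummaryView SET Status = 'Preferred' WHERE CustomerId = ?",
            (event["customer_id"],),
        )


# Each event type maps to the handlers of the view tables that care about it.
HANDLERS = {"CustomerHasBeenMadePreferredEvent": [update_customer_summary_view]}


def dispatch(stores, event_type, event):
    """Apply an event to every query store instance."""
    for store in stores:
        for handler in HANDLERS.get(event_type, []):
            handler(store, event)


stores = [new_query_store(), new_query_store()]
dispatch(stores, "CustomerHasBeenMadePreferredEvent", {"customer_id": 42})
statuses = [
    s.execute("SELECT Status FROM CustomerSummaryView WHERE CustomerId = 42").fetchone()[0]
    for s in stores
]
```

The event carries everything the handler needs, so the master database is never read while updating the query stores.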

Bounded Contexts

While CQRS touches on many pieces of software architecture, it is still not at the top of the food chain. CQRS, if used, is employed within a bounded context (DDD) or a business component (SOA) – a cohesive piece of the problem domain. The events published by one BC are subscribed to by other BCs, each updating their query and command data stores as needed.

UIs from the CQRS found in each BC can be “mashed up” in a single application, providing users a single composite view on all parts of the problem domain. Composite UI frameworks are very useful for these cases.

Summary

CQRS is about coming up with an appropriate architecture for multi-user collaborative applications. It explicitly takes into account factors like data staleness and volatility and exploits those characteristics for creating simpler and more scalable constructs.

One cannot truly enjoy the benefits of CQRS without considering the user-interface, making it capture user intent explicitly. When taking into account client-side validation, command structures may be somewhat adjusted. Thinking through the order in which commands and events are processed can lead to notification patterns which make returning errors unnecessary.

While the result of applying CQRS to a given project is a more maintainable and performant code base, this simplicity and scalability require understanding the detailed business requirements and are not the result of any technical “best practice”. If anything, we can see a plethora of approaches to apparently similar problems being used together – data readers and domain models, one-way messaging and synchronous calls.

Although this blog post is over 3000 words (a record for this blog), I know that it doesn’t go into enough depth on the topic (it takes about 3 days out of the 5 of my Advanced Distributed Systems Design course to cover everything in enough depth). Still, I hope it has given you the understanding of why CQRS is the way it is and possibly opened your eyes to other ways of looking at the design of distributed systems.

Questions and comments are most welcome.


139 Comments

September 21st, 2010 at 1:23 pm

Maninder,

I’d say that if the data does not need to be operated on by multiple actors collaboratively, then you might not need this approach. Highly complex non-collaborative domains may need it as well.

September 23rd, 2010 at 1:57 pm

Udi,

It seems like my last comment was not published. So let me retry hoping it is an idempotent operation 🙂

With my limited understanding, I see CQRS as a mechanism to scale reads and writes.

Can you please explain how multiple actors collaboratively or highly complex domain can benefit from it GIVEN the constraint “The reads and writes in that application do not need to scale independently”.

September 23rd, 2010 at 2:38 pm

Maninder,

The technical form of using one-way commands enables scaling writes, and serving reads off of a type of cache has always been a way to scale queries. All that being said, if users don’t need to collaborate, each one is working on their own data, then you have an easily partitioned domain by user, rather than by separating reads and writes.

March 2nd, 2011 at 8:06 am

Hi Udi

Thanks a lot for this post, which indeed clarifies the CQRS topic.

You said earlier you would post on Event Sourcing soon, but I didn’t find any related post (apart from the current one). Am I missing something ?

It would be nice to have such a clear explanation of event sourcing, too.

Anyway, thanks a lot 🙂

March 4th, 2011 at 1:15 am

Thanks for the nudge Joseph 🙂

March 24th, 2011 at 4:00 am

When will you publish a book already 🙂 ? I *think* my frustration with CQRS, and possibly a lot of posts here, is that there really isn’t a bible for CQRS so to speak, especially in relation to DDD+CQRS.

Please oh please work on a book that will save me a lot of time and headache trying to google cqrs 🙂

March 28th, 2011 at 8:37 am

DavidZ,

If only I had the time 🙂

March 29th, 2011 at 2:35 pm

Hi Udi

Another question came to my mind: would you recommend CQRS only for applications where performance/scalability is of the utmost importance, or more as a pattern applicable to any application, like some intranet one?

Having watched some of your talks on InfoQ, I kind of had the feeling that CQRS reduces the domain to its core so much that it would end up providing value anywhere, but most likely I’m just being over-enthusiastic…

thanks again

best

March 29th, 2011 at 3:05 pm

Joseph,

I wouldn’t say that performance/scalability are the deciding factors towards choosing CQRS – but they do contribute. The primary property of a system that would make it a good fit for CQRS is whether it is collaborative – ergo multiple users/actors working in parallel on a shared set of data.

It is likely that there are parts of a system that are collaborative, and parts that aren’t. As such, CQRS should only be used for the collaborative parts.

March 30th, 2011 at 2:04 am

Thanks again for this clarification udi, really helpful as usual.

Mind if someday I translate this article into French and link back to your post?

best

joseph

March 30th, 2011 at 2:10 am

Joseph,

That would be great – thanks.

April 14th, 2011 at 8:23 pm

Hi Udi, I’m very interested in your diagram about CQRS.

Would you let me know which tool you used to draw this diagram?

Thanks in advance.

April 15th, 2011 at 2:49 am

Brouce,

I use powerpoint.

May 3rd, 2011 at 9:04 am

Great Post Udi.

June 18th, 2012 at 9:30 am

Hi Udi.

I have a couple of design questions about applying CQS in a non-distributed environment (with possible integrations). I’d be very thankful if you’d share some experience with me.

1. Obviously a query is a synchronous call, but… should we return data in the form of a DTO, or just a DataTable or DataSet? Some articles say that a DataTable is enough, but I have had really horrible experiences with it (it’s easy to break something by changing column names, and when the project changes very often -> hell). DTOs give centralized mappings.

I’ve come to something like query objects (repositories don’t provide anything in the age of ‘big’ ORMs).

interface IQueryPerformer
{
    TResult Perform<TResult>(IQuery<TResult> query);
}

IntelliSense/ReSharper helps a lot when we type, for example:

List documents = QP.Perform(new …);

2. By using this approach I’ve moved into another issue. Are queries part of a service (getting the data contained by that service), or are queries universal, all living in one place?

What’s your way of dealing with querying data? Some kind of best practice for medium-size projects (not highly available)?

3. Commands, sent over some kind of bus or command processor. What kind of validations are performed before sending a command? Only client-side validations (filled fields, etc.)? Or also domain validations (some specific business rules)?

I would like to perform domain validations inside a handler, but then – how to return results from the command?

For example:

WebForms/MVC (doesn’t matter)

Under the click handler we perform some basic validation of fields. If the command is valid to send, we send it.

Then some commandHandler handles the command and starts business rules validation. What should we do if something goes wrong and the command can’t be processed? Throw an exception? But what if we must just return a specific result of processing, like the ID of a newly created contract, product, etc.?

We can’t assume that the command will succeed, unless we pull the business rules validations out of the domain (and I’m not 100% sure about that).

Kind Regards

Michal Samociuk

June 25th, 2012 at 6:38 am

“What if we just serialised the domain entity and put it into a single column, and had another column containing the ID? This sounds quite similar to key-value storage that is available in the various cloud providers. In which case, would you really need an object-relational mapper to persist to this kind of storage?

You could also pull out an additional property per piece of data where you’d want the “database” to enforce uniqueness.

I’m not suggesting”

I don’t agree with some of the assumptions you have here, but this one I think is just plain wrong. So should we drop almost everything that was built in terms of software development and replace it with this nonsense? Since when did referential integrity become a second-class citizen in the system?! I thought that data was way too important. Hell, almost all of the software running in the world does just the opposite, implementing proper referential integrity. Also, in relation to this:

“Another thing to understand about the domain model is that it now isn’t used to serve queries. So the question is, why do you need to have so many relationships between entities in your domain model?”

I would love to see such a system. So the system has to process commands and do some validations… where is the data for the validations going to be read from?

You do have some good ideas in here, but there are a few, that with all respect, are just nonsense.

October 28th, 2012 at 2:56 pm

“So, if we’re not returning errors to the client (who is already sending us valid commands), maybe all we need to do on the client when sending a command is to tell the user “thank you, you will receive confirmation via email shortly”. We don’t even need the UI widget showing pending commands.”

This sentence tells us that CQRS is only for (about) 5% of applications built upon customer needs.

At least my experience in building applications for clients indicates that 5%.

October 30th, 2012 at 1:57 am

dgi,

Yes, this UI facing application form of CQRS isn’t applicable everywhere – I’ve said as much many times. This is mostly focused on collaborative environments.

November 14th, 2012 at 3:25 pm

Hello.

First of all, thanks for a great article.

I have a question – if my system has, let’s say, 60% writes and 40% reads and changes displayed on the screen need to be up to date every time, does it mean that CQRS doesn’t suit me?

November 14th, 2012 at 3:31 pm

Also, I must admit that after reading Evans’ book on DDD I got even more confused than before I read it 🙂 Many people say it’s a great book, and I’m not saying it’s not, but I think I understand about 25% of it if not less.

Can you recommend some other book on DDD, Jimmy Nilsson’s maybe?

November 18th, 2012 at 5:22 am

Gleb,

You may be better served with simple CRUD (no extra layers) for some parts of your system, with CQRS coming in to play for more background processes.

November 20th, 2012 at 3:29 pm

I see. What about the book? Any suggestions? Maybe I should re-read the Evans book?

November 22nd, 2012 at 7:30 am

There’s a new book coming out from Vaughn Vernon called Implementing DDD that will probably help you out quite a bit:

http://www.amazon.com/Implementing-Domain-Driven-Design-Vaughn-Vernon/dp/0321834577

November 22nd, 2012 at 11:54 am

Thanks! Will definitely follow the book’s reviews.

December 11th, 2013 at 12:48 am

I’ve been reading in many places about the use of queues between the web and worker to increase scalability. I’m facing a challenge to move an existing system (over 10 years old code) to the Cloud, and this pattern appears to be one of the first to adopt for Cloud performance/cost reasons. My question is one you have already faced several times in this blog (but I am not convinced), and I bet it’s the most common one you face: how does the (asynchronously initiated) worker report information on broken business rules back to the user? The most common answer in the info I’ve come across is “by email”. While this works for Amazon, it won’t work for the majority of business applications – the application should show them … somehow. Have you come across agreeable mechanisms for this? Would you ever build the “Display Widget” you cast out in the blog, or do you consider it wrong to do so?

December 21st, 2013 at 12:18 pm

Simon,

You can use technologies like SignalR to push notifications back to the browser which initiated the action.

Cheers,

Udi

July 18th, 2015 at 4:33 pm

Hello,

Interesting post.

However it’s not clear when the query model and database are used, and when the “command” model and database are used.

Is the query model/database purely used for more reporting-like use cases, or is it used for all queries ?

E.g. if a user has the intent to delete a particular product, then typically the user is shown a page with a list of products (= query) first. From which he then can pick the product he wants to delete (= command).

Is the query database also used for these kinds of use cases?

July 18th, 2015 at 4:37 pm

With regards to

“Also, we shouldn’t need to access the query store to process commands – any state that is needed should be managed by the autonomous component – that’s part of the meaning of autonomy”

Is that a feasible assumption ?

How do you see this working, given the fact that command validation will (?) most probably always require fetching some information from the query store (e.g. street name) ?

Or is the assumption that for server-side command validation no data is fetched from the query store ? If that’s the assumption then where does the data come from ?

July 23rd, 2015 at 5:38 pm

EDH,

When it comes to “private data” – things that a user is working on by themselves before it is accessible to other users/processes, you would often be better served by applying simple CRUD techniques rather than CQRS.

When you’re in a more collaborative/high-contention domain, that’s when you need to start applying a CQRS approach. In practice, that means that when processing a command that requires history, the command processor will have held on to the previous commands/events that produced that history, and will use them instead of fetching information from the query store.
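As a rough illustration of a command processor relying on history it has kept for itself, here is a minimal Python sketch. The class, the method names and the "order shipped" rule are all hypothetical and only serve to show the shape of the idea: the decision is made from locally held state, with no read against the query store.

```python
class OrderCommandProcessor:
    """Hypothetical autonomous component that keeps the history it needs."""

    def __init__(self):
        # State private to this component, built only from events it has seen.
        self._shipped = set()

    def on_order_shipped(self, order_id):
        # Remember the event so later commands can be validated against it.
        self._shipped.add(order_id)

    def handle_cancel_order(self, order_id):
        # Decide using locally held history; the query store is never consulted.
        if order_id in self._shipped:
            return "rejected: already shipped"
        return "accepted"


processor = OrderCommandProcessor()
first_attempt = processor.handle_cancel_order("o-1")
processor.on_order_shipped("o-1")
second_attempt = processor.handle_cancel_order("o-1")
```

In a real system the held state would be persisted by the component rather than kept in memory; the in-memory set is an assumption made to keep the sketch short.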

September 9th, 2015 at 8:18 am

Hi Udi, a great article; still coming to terms with it. One doubt that was lingering when I first read the article was the challenge in keeping the data stores in sync. Whenever an event is published, the query store needs to pull “updated” information from the master db. In a system where there could be tons of commands that need to be processed, wouldn’t the pulls become a bottleneck, choking the master db? Am I missing something here?

September 21st, 2015 at 1:34 pm

Hi Sai,

Usually the event being published would include everything needed for updating the query store, so that the master db wouldn’t need to be pulled from.
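A minimal Python sketch of that idea, assuming a hypothetical `ProductPriceChanged` event and an in-memory stand-in for the query store (neither name comes from the article): the subscriber updates its view from the event payload alone and never calls back to the master db.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProductPriceChanged:
    """Hypothetical event; it carries every value the query side needs."""
    product_id: str
    name: str
    new_price: float


class QueryStore:
    """In-memory stand-in for a denormalized read model."""

    def __init__(self):
        self.rows = {}

    def apply(self, event: ProductPriceChanged) -> None:
        # Build the view row from the event payload alone:
        # there is no call back to the master db here.
        self.rows[event.product_id] = {
            "name": event.name,
            "price": event.new_price,
        }


store = QueryStore()
store.apply(ProductPriceChanged("p-1", "Kettle", 24.5))
```

The trade-off is a somewhat larger event in exchange for keeping the read side from adding load on the master db.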

December 30th, 2015 at 5:40 am

Hello,

I am implementing CQRS with DDD without event sourcing. So I put commands and their handlers in the Application Layer instead of Domain layer. I do validation for required fields in the command handler and if all are valid, I interact with the domain from the handler like this:

    public void Handle(ConfirmOrder command)
    {
        // validation here…
        var order = repository.Get(command.OrderId);
        order.Confirm();
        repository.Save(order, command.Id.ToString());
    }

Is it ok to put the commands and their handlers outside the domain in the Application Layer?

Thanks.

January 1st, 2016 at 3:19 pm

Nazac,

Yes, that’s fine.

April 8th, 2016 at 2:00 pm

Two things, in question after your statements on simple one-or-two object commands.

1:

> most commands only contain one or two IDs in them anyway.

I’ve seen a lot of call for “fat” interfaces for the web, argued either for latency sake, or for atomicity, or both. I might be willing to throw some of that out, but I want to drill into it first.

If you’re trying to enable a minimum-steps interface for a user (you used some form of e-commerce as an example in this article), you would potentially submit a lot of information at once – name, address, credit card, and cart id, all at the same time. These, in typical old-school apps with less inline validation, might all be validated upon submission.

I suppose it could at least be partially broken down to client-side validation first. Even upon client side validation, though, it is probably still useful to do validation at the submission level, as the “create an order with this cart sent to me” is a somewhat atomic action. Do you think submission should be broken up on the command side, even if it is not broken up on the UI side? If so, how might you break it up? I see this typically used in a “guest” use case.

Another example for this question might be the comment submission box I’m typing into right now. There’s 4 fields, and although I am not creating a user account, some commenting systems *would* do this, creating both a comment *and* a user. Same question – break that up into two commands, or leave it as one?

2:

> In fact, what kind of command would need any one-to-many relationship? And if that’s the case for one-to-many, many-to-many would definitely be out as well.

Still sort of re fat interfaces, but maybe it’s about necessarily list-oriented tasks…

Concurrently editing a list of things to modify their relative order seems like a case to me. Like sorting a list of pictures in an album, or re-ordering a Netflix queue or song playlist (before arguing that this wouldn’t happen concurrently, maybe it is shared with a trusted group and changed collaboratively? “Curation” is a big buzzword on the wide-open net these days, or at least was a couple years back…). Would you suggest modeling it in such a way that each modification would send an individual command, or would you batch it somehow?

And admittedly less inherently inter-dependent, what about batching? For a simple case, deleting a set of items you’ve checked off in a trello board (shared set of digital note cards). I can see the individual deletions aren’t dependent on each other, but for “fat interface” sake, could they be one batched command? Should they, or should it be broken down into individual removals, and if so, what about partial failures, for example on a shoddy internet connection. Wouldn’t a user expect it to be atomic if the interface appears atomic?

If you were to try to model batches, would you suggest a “batch cart” style, or would you be okay with and/or recommend list-oriented commands?

This of course doesn’t affect the data model, where you’re suggesting that children point to parent rather than vice-versa, but it certainly makes command validation more challenging.

3:

One last question that’s less directly related – Would you suggest doing CQRS for real-time inter-dependent collaboration (à la Google Docs) or something unique, since latency and immediately being informed of other actors’ updates are a concern?

I am thinking CQRS might not be so great for such a model, although admittedly tools like this are rare, and maybe not terribly important from an absolute perspective, though I see more and more hot-editing stuff coming out these last few years (Firebase, online code editors, etc), so maybe we’ll hit a true killer app on this use case soon.

—

Re my examples: I know I might be trying to over-apply CQRS, though to me the SRP benefits of the generic form of the pattern might still fit into a “monolithic” module and deployment design, and still be fairly painless to code. To me, at an abstract level, it’s just SRP, except with the understanding that reads/writes are quite different, and thus maybe you should simplify/split your states as well. I will probably blog about it some day if I end up having success applying it “in the small” 😉 3 layers are already “a lot” to me, yet still seen by me and plenty of others to be valuable. See a Rails app for “3 tiers is simple”. To me this pattern just says that there are actually partitions within your layers… (ignoring stuff like Event Sourcing).

May 8th, 2016 at 2:11 pm

Merlyn,

See some of my posts on composite UIs to see how each “logical service” would be collecting its own parts of the data submitted.

To see how those can be physically recomposed for the performance needs of remote calls over HTTP, see this post:

http://udidahan.com/2014/07/30/service-oriented-composition-with-video/

When it comes to real-time collaborative domains (like Google Docs), there is so much more significant UX analysis to do that it’s not really possible to make generic statements about the applicability of any technical approach.

Hope that helps.

December 1st, 2016 at 6:57 am

So when you implement a microservice (quoting, for example), you have the command side and the query side. What are those in reality? Is it a single process like a web api? Or are the command query sides separate executables like a windows service for command and web api for query?

December 16th, 2016 at 8:14 pm

Jeremy,

I’d say that CQRS is a higher-level architectural pattern than microservices, so you probably wouldn’t be looking to apply CQRS *to* a microservice.

July 9th, 2017 at 3:32 pm

Hi Udi,

Your article is a few years old but was a great read for someone like me who is starting to study these kinds of architecture. As stated in another comment, it is great that your focus is on CQRS only without mixing things up with event sourcing and other concepts often associated with CQRS in other articles.

My question focuses on the transaction that writes to the master and publishes the event. The master and the event queue will most probably be different systems (for example a relational database and another AC as in your schema, or a message queue). How can you ensure that both writes have succeeded, and revert any change if one did not ? There are mechanisms like 2PC, but they often have some serious drawbacks.

Do you have any recommendation on how to approach this issue ?

I think that’s why I often read about articles explaining CQRS in event sourcing architecture. Solutions like Kafka seem to handle this issue as it provides in a single all-in-one system the event log and the master (built from the event log history).

Thanks.

I am going to look into your “best of the best” article too.

September 3rd, 2017 at 11:29 am

Hi Jonathan,

This is exactly what the Outbox pattern we’ve implemented in NServiceBus does:

https://docs.particular.net/nservicebus/outbox/
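To make the general idea concrete, here is a small Python sketch of an outbox using `sqlite3` from the standard library. It is not NServiceBus’s implementation or API; the table names, the `confirm_order` and `dispatch_pending` functions and the event shape are all hypothetical. The point it illustrates is that the business change and the outgoing message are stored in one local transaction, and publishing happens afterwards from the stored message.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id TEXT PRIMARY KEY, status TEXT)")
conn.execute(
    "CREATE TABLE outbox (id INTEGER PRIMARY KEY AUTOINCREMENT, "
    "payload TEXT, dispatched INTEGER DEFAULT 0)"
)


def confirm_order(order_id):
    # One local transaction covers both the state change and the pending
    # event, so either both are stored or neither is.
    with conn:
        conn.execute(
            "INSERT OR REPLACE INTO orders (id, status) VALUES (?, 'confirmed')",
            (order_id,),
        )
        event = {"type": "OrderConfirmed", "order_id": order_id}
        conn.execute(
            "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(event),)
        )


def dispatch_pending(publish):
    # Runs separately from the command handling. If publish() raises, the
    # row stays undispatched and is picked up again on the next run.
    rows = conn.execute(
        "SELECT id, payload FROM outbox WHERE dispatched = 0 ORDER BY id"
    ).fetchall()
    for row_id, payload in rows:
        publish(json.loads(payload))
        with conn:
            conn.execute(
                "UPDATE outbox SET dispatched = 1 WHERE id = ?", (row_id,)
            )


published = []
confirm_order("o-42")
dispatch_pending(published.append)
```

Because a message can be published and then fail to be marked as dispatched, a sketch like this gives at-least-once delivery, so subscribers would need to tolerate duplicates.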