Archive for the ‘Scalability’ Category

Sunday, February 22nd, 2009

There’s been some recent discussion as to the “cost” of messaging:

Greg Young asserts:

“I believe that this shows there to be a rather negligible cost associated with the use of such a model. There is however a small cost, this cost however I believe only exists when one looks at the system in isolation.”

Ayende adds his perspective:

“The cost of messaging, and a very real one, comes when you need to understand the system. In a system where message exchange is the form of communication, it can be significantly harder to understand what is going on.”

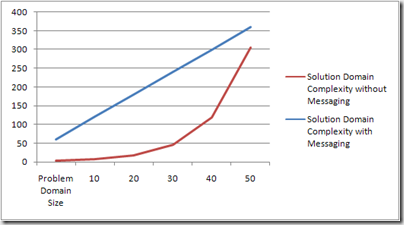

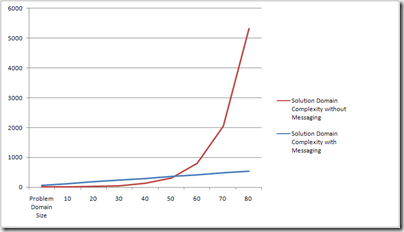

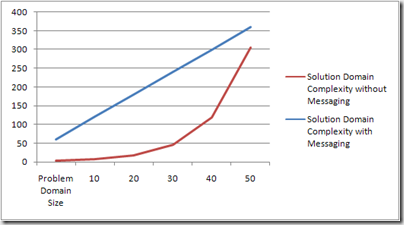

Of course, both these intelligent fellows are right. The reason for the apparent disparity in viewpoints has to do with which part of the following graph you look at. Ayende zooms in on the left side:

As systems get larger, though, the only way to understand them is by working at higher levels of abstraction. That’s where messaging really shines, as the incremental complexity remains the same by maintaining the same modularity as before:

In Ayende’s post, he follows the design I described a while back on using messaging for user management and login for a high-scale web scenario. In his comments, he agrees with the above stating:

“I certainly think that a similar solution using RPC would be much more complex and likely more brittle.”

I feel quite conservative in saying that most enterprise solutions fall on the right side of the intersection in the graph.

That being said, don’t underestimate the learning curve developers go through with messaging. While the mechanics are similar, the mindset is very different. Think about it like this:

You’ve driven a car for years in the US. It’s practically second nature. Then you fly to the UK, rent a car, and all of a sudden your brain is in meltdown (or vice versa for those going from the UK to the US).

Summary

If you are going down the messaging route, please be aware that there are shades of gray there as well. You don’t have to implement your user management and login the way I outlined in my post if you don’t require such high levels of scalability, but even lower levels of scalability can benefit from messaging.

Just as there isn’t a single correct design for non-messaging solutions, the same is true for those using messaging. Finding the right balance is tricky, and critical.

When the code is simple in every part of the system, and the asynchronous interactions are what provide for the necessary complexity the problem domain requires, that’s when you know you’ve got it just right.

Posted in Architecture, EDA, Messaging, Pub/Sub, Scalability, SOA | 3 Comments »

Monday, December 29th, 2008

I’ve been consulting with a client who has a wildly successful web-based system, with well over 10 million users and looking at a tenfold growth in the near future. One of the recent features in their system was to show users their local weather and it almost maxed out their capacity. That raised certain warning flags as to the ability of their current architecture to scale to the levels that the business was taking them.

On Web 2.0 Mashups

One would think that sites like Weather.com and friends would be the first choice for implementing such a feature. Only thing is that they were strongly against being mashed-up Web 2.0 style on the client – they had enough scalability problems of their own. Interestingly enough (or not), these partners were quite happy to publish their weather data to us and let us handle the whole scalability issue.

Implementation 1.0

The current implementation was fairly straightforward: the client issues a regular web service request to the GetWeather webmethod, the server uses the user’s IP address to find their location, looks up the weather for that location in the database, and returns it to the user. Standard fare for most dynamic data and the way most everybody would tell you to do it.

Only thing is that it scales like a dog.

Add Some Caching

The first thing you do when you have scalability problems and the database is the bottleneck is to cache, well, that’s what everybody says (same everybody as above).

The thing is that holding all the weather of the entire globe in memory, well, takes a lot of memory. More than is reasonable. In which case, there’s a fairly decent chance that a given request can’t be served from the cache, resulting in a query to the database, an update to the cache, which bumps out something else, in short, not a very good hit rate.

Not much bang for the buck.

If you have a single datacenter, having a caching tier that stores this data is possible, but costly. If you want a highly available, business continuity supportable, multi-datacenter infrastructure, the costs add up quite a bit quicker – to the point of not being cost effective (“You need HOW much money for weather?! We’ve got dozens more features like that in the pipe!”)

What we can do is to tell the client we’re responding to that they can cache the result, but that isn’t close to being enough for us to scale.

Look at the Data, Leverage the Internet

When you find yourself in this sort of situation, there’s really only one thing to do:

In order to save on bandwidth, the most precious commodity of the internet, the various ISPs and backbone providers cache aggressively. In fact, HTTP is designed exactly for that.

If user A asks for some html page, the various intermediaries between his browser and the server hosting that page will cache that page (based on HTTP headers). When user B asks for that same page, and their request goes through one of the intermediaries that user A’s request went through, that intermediary will serve back its cached copy of the page rather than calling the hosting server.

Also, users located in the same geographic region by and large go through the same intermediaries when calling a remote site.

Leverage the Internet

The internet is the biggest, most scalable data serving infrastructure that mankind was lucky enough to have happen to it. However, in order to leverage it – you need to understand your data and how your users use it, and finally align yourself with the way the internet works.

Let’s say we have 1,000 users in London. All of them are going to have the same weather. If all these users come to our site in the period of a few hours and ask for the weather, they all are going to get the exact same data. The thing is that the response semantics of the GetWeather webmethod must prevent intermediaries from caching so that users in Dublin and Glasgow don’t get London weather (although at times I bet they’d like to).

REST Helps You Leverage the Internet

Rather than thinking of getting the weather as an operation/webmethod, we can represent the various locations’ weather data as explicit web resources, each with its own URI. Thus, the weather in London would be http://weather.myclient.com/UK/London.

If we were able to make our clients in London perform an HTTP GET on http://weather.myclient.com/UK/London then we could return headers in the HTTP response telling the intermediaries that they can cache the response for an hour, or however long we want.

That way, after the first user in London gets the weather from our servers, all the other 999 users will be getting the same data served to them from one of the intermediaries. Instead of getting hammered by millions of requests a day, the internet would shoulder easily 90% of that load making it much easier to scale. Thanks Al.

This isn’t a “cheap trick”. While it is straightforward for something like weather, understanding the nature of your data and intelligently mapping that to a URI space is critical to building a scalable system and reaping the benefits of REST.
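As a rough sketch of the idea above (not the client's actual code), here is how a location could be mapped to its own URI and paired with response headers that allow intermediaries to cache it. The function names are made up for illustration; the base URI and the one-hour lifetime come from the example in the text, and `Cache-Control: public, max-age=...` is the standard HTTP way to say "shared caches may keep this".

```python
from urllib.parse import quote

# Base URI taken from the example in the post; treat it as a placeholder.
BASE_URI = "http://weather.myclient.com"


def weather_uri(country: str, city: str) -> str:
    """Map a location to its own weather resource URI."""
    return f"{BASE_URI}/{quote(country)}/{quote(city)}"


def cache_headers(max_age_seconds: int = 3600) -> dict[str, str]:
    """Headers telling shared caches (proxies, ISPs) they may store the response."""
    return {"Cache-Control": f"public, max-age={max_age_seconds}"}
```

Every user in London now asks for the same URI, so one cached copy at an intermediary can serve all of them until the lifetime expires.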

What’s left?

The only thing that’s left is to get the client to know which URI to call. A simple matter, really.

When the user logs in, we perform the IP to location lookup and then write a cookie to the client with their location (UK/London). That cookie then stays with the user saving us from having to perform that IP to location lookup all the time. On subsequent logins, if the cookie is already there, we don’t do the lookup.

BTW, we also show the user “you’re in London, aren’t you?” with the link allowing the user to change their location, which we then update the cookie with and change the URI we get the weather from.
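The cookie logic might look something like the sketch below. The cookie name `location` and the `lookup_by_ip` callable are assumptions for illustration; the post doesn't say how the real lookup is wired up.

```python
from typing import Callable, Optional


def resolve_location(
    cookies: dict[str, str],
    client_ip: str,
    lookup_by_ip: Callable[[str], str],
) -> tuple[str, Optional[str]]:
    """Return (location, cookie_to_set).

    cookie_to_set is None when the cookie already exists, so the
    IP-to-location lookup is skipped entirely on later logins.
    """
    existing = cookies.get("location")  # hypothetical cookie name
    if existing:
        return existing, None
    location = lookup_by_ip(client_ip)
    return location, location
```

When the user corrects their location through the link, the same cookie is simply overwritten with the new value, and the weather URI follows from it.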

In Closing

While web services are great for getting a system up and running quickly and interoperably, scalability often suffers. Not so much as to be in your face, but after you’ve gone quite a ways and invested a fair amount of development in it, you find it standing between you and the scalability you seek.

Moving to REST is not about turning on the “make it restful” switch in your technology stack (ASP.NET MVC and WCF, I’m talking to you). Just like with databases there is no “make it go fast” switch – you really do need to understand your data, the various users’ access patterns, and the volatility of the data so that you can map it to the “right” resources and URIs.

If you do walk the RESTful path, you’ll find that the scalability that was once so distant is now within your grasp.

Posted in Architecture, Caching, Performance, REST, Scalability, Web Services | 30 Comments »

Monday, December 15th, 2008

From Integrated Simplicity:

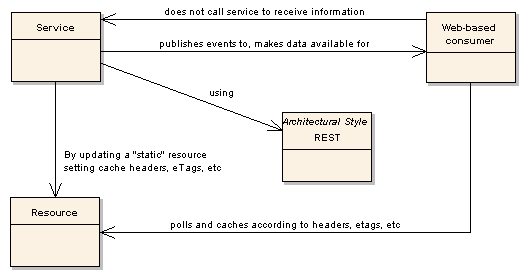

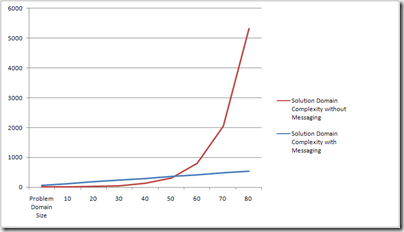

The question of how web-based (or 3rd party) consumers can work with pub/sub based services comes up a lot.

Many developers are used to implementing web services exposing methods on them like GetAllCustomers.

When moving to pub/sub and other more loosely coupled messaging patterns, developers look to implement the same pattern, opting for something like duplex GetCustomersRequest and GetCustomersResponse. The reasoning is simple and straightforward – it is difficult to push data over the web to consumers.

However, there are still ways to disconnect the preparation of the data from its usage thus gaining many of the advantages of pub/sub.

By employing REST principles and modelling our customer list as an explicit resource, web-based consumers would simply perform regular HTTP GET operations on the URI to get the list of customers.

The resource itself could be a simple XML file – it wouldn’t need to be dynamic at all.
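One way to picture this: a subscriber listens for customer changes and regenerates the static file, and the web server just serves that file. The sketch below assumes a hypothetical `on_customers_changed` handler and a made-up XML shape; how the event reaches the handler depends on whatever bus you use.

```python
import os
import xml.etree.ElementTree as ET


def render_customer_list(customers: list[dict[str, str]]) -> bytes:
    """Serialize the customer list to XML (element names are illustrative)."""
    root = ET.Element("customers")
    for customer in customers:
        element = ET.SubElement(root, "customer", id=customer["id"])
        element.text = customer["name"]
    return ET.tostring(root, encoding="utf-8")


def on_customers_changed(customers: list[dict[str, str]], path: str) -> None:
    """Subscriber handler: rewrite the static resource when customers change."""
    temp_path = path + ".tmp"
    with open(temp_path, "wb") as handle:
        handle.write(render_customer_list(customers))
    # Swap the file in one step so readers never see a half-written resource.
    os.replace(temp_path, path)
```

Preparing the data happens when it changes, not when it is requested, which is where the decoupling comes from.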

You can get all the scalability benefits of pub/sub for web based consumers. All you need is a bit of REST 🙂

Posted in Architecture, EDA, Integrated Simplicity, Pub/Sub, REST, Scalability, SOA | 4 Comments »

Saturday, November 15th, 2008

The great people at IASA have made the recording for my webcast available online:

The slides can be found here.

I also gave this talk at TechEd Barcelona and wanted to thank the attendee who posted this comment:

“You’ve done it again. Everytime I attend a session of yours I leave the room with new insights and inspiration on how to improve my software…”

You made my day.

Posted in Architecture, Availability, Presentations, Reliability, Scalability | 4 Comments »

Wednesday, August 13th, 2008

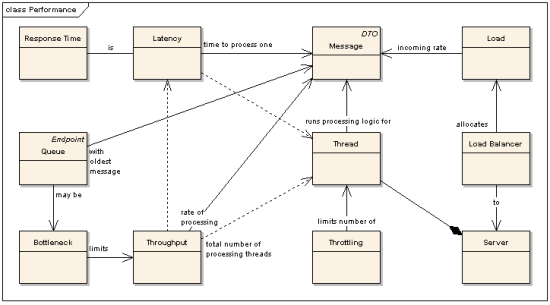

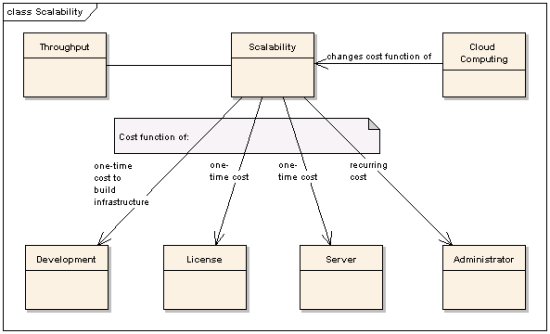

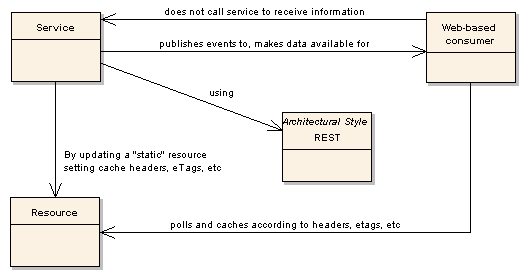

To the question of scale Ayende brings up, I thought I’d tap my concept map.

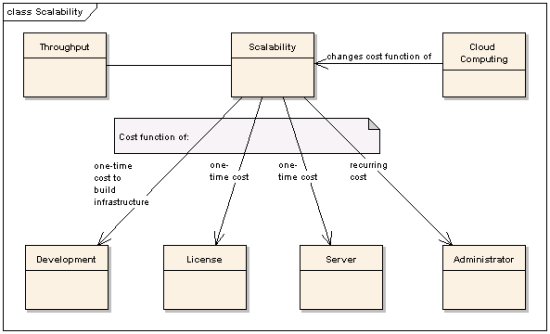

First of all, I wanted to address the relationship between various topics related to scalability:

And on the connection between scalability and throughput:

The important message here is that the scalability of a system is a cost function that gives throughput as a function of recurring costs and one time costs – servers and other hardware, and the join of buy & build:

Did you write your own locking/transaction mechanism on top of an open source distributed cache or did you buy a license for a space-based technology?

Also, don’t forget that people need to administer all the servers that you have. Those people cost money (easily 100K per year). Maybe, because you haven’t invested in management or monitoring tools, you need one person for every two servers. This will influence the breakdown of up front costs and recurring costs. Also, the level of availability you require will impact this as well.

In my experience, architects don’t consider often enough the operations environment in their "scalability calculations".

What this means is that there’s no such thing as technically "not being able to scale".

Rather, it means that the cost (up front + recurring) of supporting higher throughput grows faster than the revenue per user/request/whatever.

Sometimes, the solution is just to find ways to make more money per customer.

For more technical solutions, take a look at the difference between capacity and scalability and how the competing consumer pattern helps scale out.

Scalability, it’s all about the money.

—

Oh, I almost forgot, I also had a great conversation with Carl and Richard about scaling web sites that’s now up on the .NET Rocks site. Enjoy.

Posted in Architecture, Availability, Performance, Scalability | No Comments »

Wednesday, July 30th, 2008

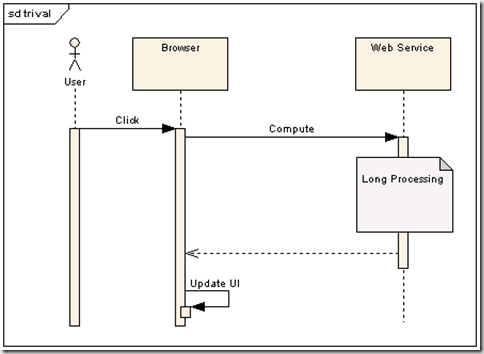

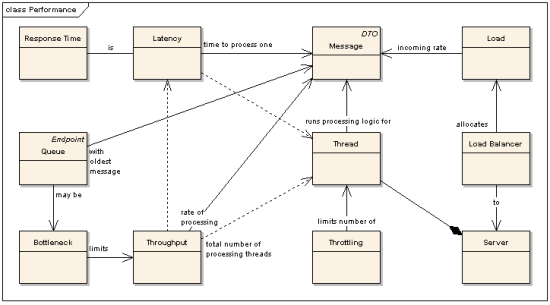

While I was at TechEd USA I had an attendee, Will, come up and ask me an interesting question about how to handle web service calls that can take a long time to complete. He has a number of these kinds of requests ranging from computationally intensive tasks to those requiring sifting through large amounts of data. What Will was having problems with was preventing too many of these resource-intensive tasks from running concurrently (causing increased memory usage, paging, and eventually the server becoming unavailable).

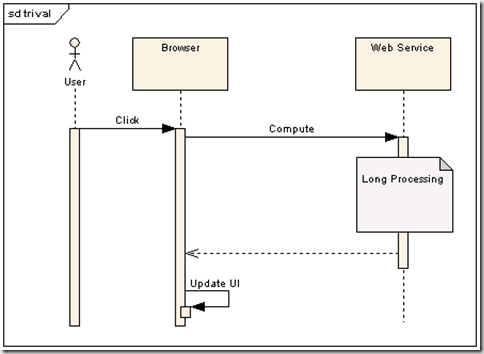

For comparison later, here’s a diagram showing the trivial interaction:

One solution that he’d tried was to set up the web server to throttle those requests and keep a much smaller maximum thread-pool size for that application pool. The unfortunate side effect of that solution was that clients would get “turned away” by a not-so-pleasant Connection Refused exception.

Will had been to my web scalability talk and was curious about how I was using queues behind my web services. I’ve also heard this question from people just getting started with nServiceBus when looking at the Web Services Bridge sample. Here’s the code that’s in the sample and in just a second I’ll tell you why you shouldn’t do this:

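    [WebMethod]
    public ErrorCodes Process(Command request)
    {
        object result = ErrorCodes.None;

        // Send the request onto the bus and register a callback
        // that fires when the correlated completion message comes back.
        IAsyncResult sync = Global.Bus.Send(request).Register(
            delegate(IAsyncResult asyncResult)
            {
                CompletionResult completionResult = asyncResult.AsyncState as CompletionResult;
                if (completionResult != null)
                {
                    result = (ErrorCodes) completionResult.ErrorCode;
                }
            },
            null
        );

        // Blocks this thread, and keeps the HTTP connection open, until the callback has run.
        sync.AsyncWaitHandle.WaitOne();

        return (ErrorCodes)result;
    }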

Let me repeat, this is demo-ware. Do not use this in production.

What’s happening is that in this web service call we’re putting a message in a queue for some other process/machine to process. When that processing is complete, we’ll get a message back in our local queue (which you don’t see) which is correlated to our original request, firing off the callback. We block the web method from completing (using the WaitOne call) thus keeping the HTTP connection to the client open.

The problem here is that we’re wasting resources (the HTTP connection and the thread) while waiting for a response which, as already mentioned, can take a long time. In B2B or other server to server integration environments there are all sorts of middleware solutions that help us solve these problems, however in Will’s case browsers needed to interact with this web service. All he had was HTTP.

HTTP Solutions

Another attendee who was listening in (sorry I don’t remember your name) said that he was solving similar problems using polling but that he was having scalability problems as well.

What often surprises my clients when we deal with these same issues is that I do suggest a polling based solution, but one that still uses messaging, and this is what I described to Will:

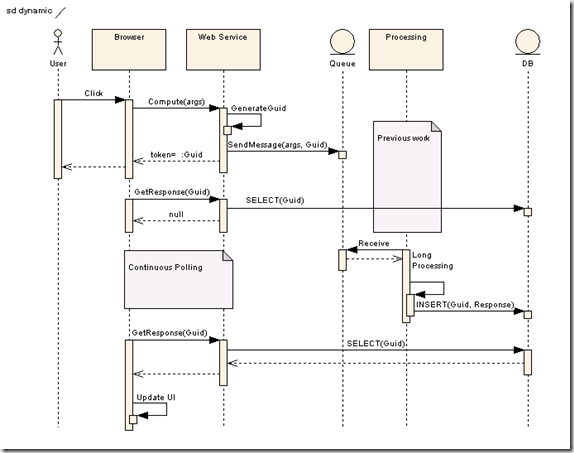

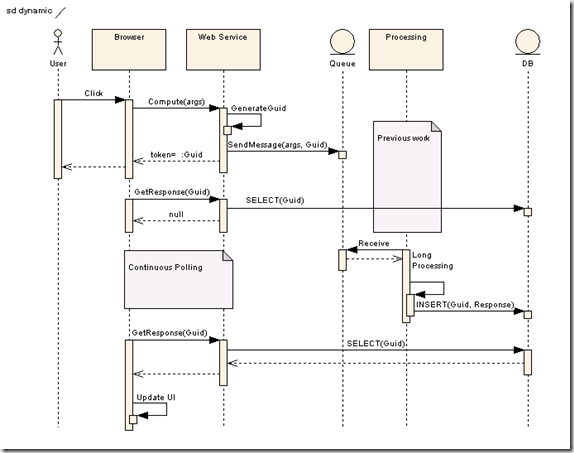

Since we can’t actually push a message to a browser over HTTP from our server when processing is complete, the browser itself will be responsible for pulling the response. We still don’t want to leave costly resources like HTTP connections open for a long time. However, if the browser is going to be polling for a response, we’ll need some way to correlate those subsequent requests with the original one. What we’re going to do is use the Asynchronous Completion Token pattern, and later I’ll show how to optimize it for web server technology.

Basic Polling

When the browser calls the web service, the web service will generate a Guid, put it in the message that it sends for processing, and return that guid to the browser. When the processing of the message is complete, the result will be written to some kind of database, indexed by that guid. The browser will periodically call another web method, passing in the guid it previously received as a parameter. That web method will check the database for a response using the guid, returning null if no response is there. If the browser receives a null response, it will “sleep” a bit and then retry.
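Here is a minimal sketch of that flow, with an in-memory dict standing in for the database and a plain list standing in for the queue. All names here are made up for illustration; the point is only the token handed back on submit and looked up on each poll.

```python
import uuid
from typing import Callable, Optional


class ResponseStore:
    """Stand-in for the database of results, indexed by guid."""

    def __init__(self) -> None:
        self._responses: dict[str, str] = {}

    def save(self, token: str, result: str) -> None:
        self._responses[token] = result

    def get(self, token: str) -> Optional[str]:
        return self._responses.get(token)


def submit(request: str, queue: list[tuple[str, str]]) -> str:
    """First web method: enqueue the work and return the guid immediately."""
    token = str(uuid.uuid4())
    queue.append((token, request))
    return token


def process_next(queue: list[tuple[str, str]], store: ResponseStore,
                 handler: Callable[[str], str]) -> None:
    """Processing node: handle one message and store the result under its guid."""
    token, request = queue.pop(0)
    store.save(token, handler(request))


def check_response(store: ResponseStore, token: str) -> Optional[str]:
    """Second web method: None means "not ready yet, sleep and retry"."""
    return store.get(token)
```

Note that neither web method blocks: the first returns as soon as the message is queued, and the second is a single lookup.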

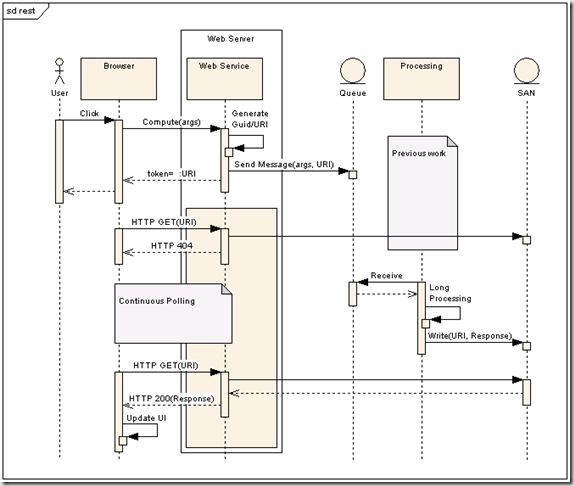

One of the problems with this solution is that polling uses up server resources – both on the web server and our DB; threads, memory, DB connections. A better solution would decrease the resource cost of the polling. Let’s use the fundamental building blocks of the web to our advantage – HTTP GET and resources:

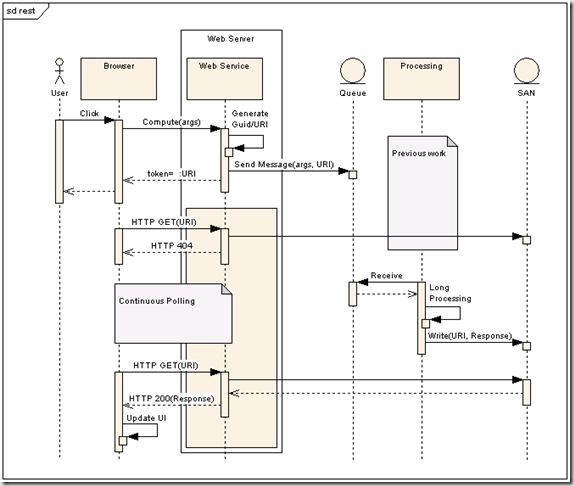

REST-full Polling

Instead of using a guid to represent the id of the response, let’s consider the REST principle of “everything’s a resource”. That would mean that the response itself would be a resource. And since every resource has a URI, we might as well use that URI in lieu of the guid. So, instead of our web service returning a guid, let’s return a URI – something like:

http://www.acme.com/responses/88ec5359-a5d8-4491-a570-3bfe469f3a64.xml

As you can see, the guid is still there. So, what’s different?

What’s different is that instead of having the processing code write the response to the database, it writes it to a resource. In the case of a web farm, this can be done by writing some XML to a file on the SAN. Also, the browser wouldn’t need to call a web service to get the response; it would just do an HTTP GET on the URI. If it gets an HTTP 404, it would sleep and retry as before. The SAN is needed because, as the browser polls, its requests may arrive at various web servers, so the response needs to be accessible from any one of them.

Just as an aside, it would be better to free the processing node as quickly as possible and have something else write the response to the SAN. That would be done simply by sending a message from the processing node to a different node whose only job is to write responses to disk.

The reason that the URI makes a difference is that serving “static” resources is something that web servers do extremely efficiently without requiring any managed resources (like ASP.NET threads). That’s a big deal.

We’re still using HTTP connections for the polling but that’s something whose effect can be mitigated to a certain degree.
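The client side of this is a simple loop: GET the URI, treat a 404 as "not ready", sleep, retry. In the real system this would be JavaScript in the browser; the Python below is only a stand-in to show the logic, and `fetch` is an injected callable (an assumption of this sketch) so the loop can be exercised without a network.

```python
import time
from typing import Callable, Optional


def poll_resource(
    uri: str,
    fetch: Callable[[str], tuple[int, Optional[bytes]]],
    interval_seconds: float = 1.0,
    max_attempts: int = 30,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[bytes]:
    """GET the response resource until it exists.

    fetch(uri) returns (status_code, body). A 404 means the response
    has not been written yet; anything other than 200 or 404 is an error.
    Returns None if the resource never appears within max_attempts.
    """
    for attempt in range(max_attempts):
        status, body = fetch(uri)
        if status == 200:
            return body
        if status != 404:
            raise RuntimeError(f"unexpected HTTP status {status} for {uri}")
        if attempt < max_attempts - 1:
            sleep(interval_seconds)
    return None
```

The server side of each poll is a plain static file lookup, which is exactly the cheap path the web server is good at.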

Timed REST-full Polling

Since various requests can take varying amounts of time to process, it’s difficult to know at what rate the browser should poll. So, why don’t we have the web service tell it? As a part of the response to the original web service call, instead of just returning a URI, we could also return the polling interval – 1 second, 5 seconds, whatever is appropriate for the type of request. This value could easily be configurable [RequestType, PollingInterval].

An even more advanced solution would allow you to change these values dynamically. The advantage that would be gained would be that your operations team could better manage the load on your servers. When a large number of users are hitting your system, you could decrease the rate at which your servers would be polled, thus leaving more HTTP connections for other users.
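A sketch of what the initial response could carry. The request types, the interval values and the field names are all invented for illustration; only the [RequestType, PollingInterval] shape and the example URI format come from the text.

```python
# Hypothetical [RequestType, PollingInterval] table, in seconds.
POLLING_INTERVALS: dict[str, float] = {
    "search": 1.0,
    "report": 5.0,
}
DEFAULT_POLLING_INTERVAL = 2.0  # assumed fallback for unlisted request types


def accepted_response(request_type: str, token: str,
                      base_uri: str = "http://www.acme.com/responses") -> dict:
    """Build the reply to the original call: where to poll, and how often."""
    return {
        "uri": f"{base_uri}/{token}.xml",
        "pollingIntervalSeconds": POLLING_INTERVALS.get(
            request_type, DEFAULT_POLLING_INTERVAL
        ),
    }
```

Because the table is just data, operations can change it at runtime without touching the browser code; the browser simply obeys whatever interval it was given.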

Scaling and Adaptive Polling

You’d probably also want to scale out the number of processing nodes behind your queue. The nice thing is that you could change the polling interval as you scale the various processing nodes per request type providing better responsiveness for the more critical requests. Once we add virtualization, things get really fun:

We had separate queues per request type, so that we could easily see the load we were under for each type of request. That way, we could scale out the processing nodes per request type as well as change the polling interval. By virtualizing our processing nodes, and writing scripts to monitor queue sizes, we had those scripts automatically provisioning (and de-provisioning) nodes as well as changing the polling interval of the browsers.

This had the enormous benefit of the system automatically shifting resources to provide the appropriate relative allocation for the current load as its macroscopic make-up changed.
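To give a feel for the kind of rule such a monitoring script might apply, here is a deliberately simple sketch. The formula and every parameter (per-node capacity, the 30-second ceiling) are assumptions made up for this example, not what we actually ran.

```python
def adaptive_interval(
    queue_depth: int,
    nodes: int,
    base_interval: float = 1.0,
    per_node_capacity: int = 10,
    max_interval: float = 30.0,
) -> float:
    """Pick a polling interval from the backlog per processing node.

    Below capacity, browsers poll at the base rate. Above it, the
    interval stretches in proportion to the overload, up to a ceiling.
    """
    if nodes < 1:
        raise ValueError("at least one processing node is required")
    load = queue_depth / (nodes * per_node_capacity)
    return min(max_interval, base_interval * max(1.0, load))
```

Provisioning more nodes for a request type lowers the load figure, which in turn shortens the interval for that type of request.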

Summary

Will was well-pleased with the solution which, although more complicated than what he had originally tried, was flexible enough to meet his needs. As opposed to pure server-based solutions, here we make more use of the browser (writing our own Javascript) instead of putting our faith in some Ajax-y library. That’s not to say that you couldn’t wrap this up into a library – in essence, it is a kind of messaging transport for browser to server communication allowing duplex conversations.

In fact, what could be done is to return multiple responses to the browser over a long period of time. In the response that comes back to the browser could be an additional URI where the next response will be. This can be used for reporting the status of a long running process, paging results, and in many other scenarios.

And, one parting thought, could this not be used for all browser to web service communication?

Posted in Architecture, Messaging, Scalability, Web Services | 23 Comments »

Thursday, July 17th, 2008

I’ve received some great feedback on my MSDN article and some really great questions that I think more people are wondering about, so I think I’ll try to do a post per question and see how that goes.

Libor asks:

“Would you recommend using durable messaging for systems where there are similar requirements with respect to data reliability as you had – ie. not losing any messages? If so, then why didn’t the final version of your solution use it? If not, can you explain why?”

The answer is, as always, it depends, but here’s what it depends on:

When designing a system, we need to take a good, hard look at how we manage state, and what properties that state has. In a system of reasonable size we can expect various families of state with respect to their business value, data volatility, and fault-tolerance window. Each family needs to be treated differently. While durable messaging may be suitable for one, it may be overkill or underkill for another.

So, here’s what we’re going to be looking at:

- Business Value

- Data Volatility

- Fault-Tolerance Window

Business Value

When talking about business value, I want to talk about what “not losing any messages” means. The question is under what conditions will the messages not be lost, or rather, what are the threshold conditions where messages may start getting lost. If all our datacenters are nuked, we will lose data. It’s likely the business is OK with that (as much as can be expected under those circumstances). If a single server goes down, it’s likely the business would not be OK with losing messages containing financial data. However, if a message requesting the health of a server were to get lost under those same conditions, that would probably be alright. In other words, what does that message represent in business terms?

Data Volatility

Data volatility also has an impact. Let’s say that we’re building a financial trading system. The time that it takes us to respond to an event (message) that the cost of a certain financial instrument has changed, and the message that we send requesting to buy that security is critical. Let’s say that has to be done in under 10ms. Now, some failure has occurred preventing our message from reaching its destination for 20ms. What should we do with that message? Should we keep it around, making sure it doesn’t get lost? Not in this domain. On the contrary, that message should be thrown away as its “business lifetime” has been exceeded. Furthermore, even during that original period of 10ms, the use of durable messaging may make it close to impossible to maintain our response times.
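The “business lifetime” idea can be sketched as a time-to-live carried on the message itself. The `Message` type and `drop_expired` function below are hypothetical; many messaging systems offer something similar built in, but the names here are mine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    body: str
    sent_at: float        # seconds, on whatever clock the system agrees on
    time_to_live: float   # the message's business lifetime, in seconds

    def is_expired(self, now: float) -> bool:
        """True once the message has outlived its business lifetime."""
        return now - self.sent_at > self.time_to_live


def drop_expired(messages: list[Message], now: float) -> list[Message]:
    """Keep only the messages that are still worth delivering."""
    return [message for message in messages if not message.is_expired(now)]
```

With a 10ms lifetime, the buy request that was held up for 20ms is discarded rather than delivered late.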

Fault-Tolerance Window

These two topics feed into the third and more architectural one, the fault-tolerance window: for what period of time do we require fault tolerance, and with respect to how many (and what kind of) faults? This leads us to analyze how many machines we need to copy a message to before we release the calling thread. We’d also look at which datacenters those machines reside in. This will also impact (or be impacted by) the kinds of links we have to these datacenters if we want to maintain response times. These numbers will need to change when the system identifies a disaster – degrading itself to a lower level of fault-tolerance after a hurricane knocks out a datacenter, and returning to normal once it comes back up.

Re-Evaluating Durable Messaging

Durable messaging may be used at various points in each part of the solution, but we need to look at message size, the rate those messages are being written to disk, how fast the disk is, how much available disk we have (so we don’t make things worse in the case of degraded service), etc. Companies like Amazon also take into account disk failure rates, replacement rates (disks aren’t replaced immediately, you know), and many other factors when making these decisions.

Summary

Our job as architects when designing the system is to find that cost-benefit balance for the various parts of the system according to these very applicative parameters. No, it’s not easy. No, cloud computing will not magically solve all of this for us. But we are getting more technical tools to work with, operations staff are getting better at working with us in the design phase, and our thought processes are becoming more rigorous in dealing with the scary conditions of the real world.

To your question, Libor, as to why we didn’t eventually use durable messaging in our solution, the answer is that we solved the overall state management problem by setting up an applicative protocol with our partners which was resilient in the face of faults by using idempotent messages that could be resent as many times as necessary. You can read more about it here. This solution isn’t viable for other kinds of interactions but was just what we needed to get the job done.
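To show why safe resending matters, here is one common way to make a receiver tolerate duplicates: remember the ids of messages already applied. This is not the protocol we used with our partners (the post doesn't describe it in detail); the class and the message id are assumptions for this sketch.

```python
class AccountHandler:
    """Applies each deposit at most once, however many times it is resent."""

    def __init__(self) -> None:
        self.balance = 0
        self._seen_ids: set[str] = set()

    def handle(self, message_id: str, amount: int) -> bool:
        """Apply the message; return False if it was a duplicate."""
        if message_id in self._seen_ids:
            return False  # already applied, resending changes nothing
        self._seen_ids.add(message_id)
        self.balance += amount
        return True
```

With a receiver like this, the sender can simply retry until it gets an acknowledgement, which removes the need to guarantee that any single send survives a fault.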

Hope that helps.

Posted in Architecture, Availability, Messaging, Performance, Reliability, Scalability | 4 Comments »

Monday, June 30th, 2008

This topic is getting more play as more people are using WCF and WF in real-world scenarios, so I thought I’d pull the things that I’ve been watching in this space together:

Reliability

Locking in SqlWorkflowPersistenceService (via Ron Jacobs) where, if you want predictable persistence (MS: ‘none of our customers asked for this to be easy’), you need to use a custom activity (which Ron was kind enough to supply).

“Given what I learned today I’d have to say that I’d be very careful about using workflows with an optimistic locking. Detecting these types of situations is not that simple.”

Let’s think about that. If we’re doing pessimistic locking, we run into a problem: if a host restarts (as the result of a critical Windows patch or some other unexpected occurrence), the workflows it had locked can’t be handled by any other host in the meantime (you didn’t care so much about your SLA, did you?).

Luckily, someone’s come up with a hack that works around this robustness problem in Scalable Workflow Persistence and Ownership.

“So this code will attempt to load workflow instances with expired locks every second. Is it a hack? Yes. But without one of two things in the SqlWorkflowPersistenceService its the sort of code you have to write to pick up unlocked workflow instances robustly.”

This will seriously churn the table used to store your workflows, decreasing performance of workflows that haven’t timed out. Oh well.

Testability

Implementing WCF Services without Referencing WCF (via Mark Seemann):

“More than a year ago, I wrote my first post on unit testing WCF services. One of my points back then was that you have to be careful that the service implementation doesn’t use any of the services provided by the WCF runtime environment (if you want to keep the service testable). As soon as you invoke something like OperationContext.Current, your code is not going to work in a unit testing scenario, but only when hosted by WCF.”

After pointing out some of the more basic difficulties in testability a straightforward WCF implementation brings, Mark turns the heat up in his follow-up post, Modifying Behavior of WCF-Free Service Implementations:

“Perhaps you need to control the service’s ConcurrencyMode, or perhaps you need to set UseSynchronizationContext. These options are typically controlled by the ServiceBehaviorAttribute. You may also want to provide an IInstanceProvider via a custom attribute that implements IContractBehavior. However, you can’t set these attributes on the service implementation itself, since it mustn’t have a reference to System.ServiceModel.”

Wow – all the things required to make a WCF service scalable and thread-safe make it difficult to test. In the end, we’re beginning to see how many hoops we have to go through in order to get separation of concerns, but until we can take all this and get it out of our application code, it’s an untenable solution. I hope Mark will continue with this series, if only so I can take the framework that might grow out of it and use it as a generic WCF transport for NServiceBus.

Comparison

After the Neuron-NServiceBus comparison that Sam and I had, we talked some more. After going through some of the rationale and thinking, Sam even put nServiceBus into his WCF-Neuron comparison talk. Sam had this to say about nServiceBus:

“The bottom line is: I like what I see. Although it’s a framework, not an ESB product like Neuron, it’s a powerful framework that takes the right approach on SOA and enforces a paradigm of reliable one-way, *non-blocking* calls. That is the point of the talk tonight overall; we need to get away from the stack world of synchronous RPC calls to true asynchronous non-blocking message based SOA systems.”

The main concern I have with a WCF+WF based solution is that developers need to know a lot in order to make it testable, scalable, and robust. In nServiceBus, that’s baked into the design. It would be extremely difficult for a developer writing application logic to interfere with when persistence needs to happen, or the concurrency strategy of long-running workflows. The fact that message handlers in the service layer don’t need concurrency modes, instance providers, or any of that junk makes them testable by default.

Posted in NServiceBus, Reliability, Scalability, Testing, WCF, Workflow | No Comments »

Wednesday, June 25th, 2008

For reporting, that is.

And doesn’t handle concurrency!

Unless you don’t expose setters.

I guess it depends, doesn’t it?

Well, that was Ted’s assertion in his recent Pragmatic Architecture column on data access.

But, “it depends” doesn’t get the system built, does it?

So, here are some rules for using o/r mapping that will get you 99% of the way there.

Yes, you heard me.

Rules.

They do not depend.

If you’re doing something significantly bigger than enterprise-scale development, and you are already doing this, and it isn’t enough, give me a call. Here we go.

- No reporting.

I mean it. Don’t report off of live data.

This isn’t just an o/r mapping thing.

Users can tolerate some, if not quite a lot of, latency.

And it’s not like objects are even used. It’s just rolled up data. Not a single behaviour for miles.

- Don’t expose setters

You want multiple users sharing and collaborating on data, right? Then don’t force them to either overwrite each other’s data, or throw away their own. There is one simple way to avoid that: Get an object, call a method. Once the object has the most up-to-date data, pass all the client data in via a method call. The object will decide if it’s valid, from a business perspective as well, and then update the appropriate fields.

Now your DBAs can vertically partition tables accordingly, and improve throughput. After that, you can increase the isolation level, to improve safety, without hurting throughput.

This will also keep your logic encapsulated, bringing you closer to a true Domain Model.

If your O/R mapping tool requires you to have setters on your domain classes, hide those from your service layer behind an interface.

- Grids are like reports.

No o/r mapping required there either. While you probably won’t be showing grids of yesterday’s data to users in an interactive environment, it’s still just data – no behaviour.

However, users should NOT update data in those grids. This gets back to rule 2. Have users select a specific task they want to perform, pop open a window, and have them do it there. Change customer address. Discount order. You get the picture. That way you’ll know what method to call on those objects you designed in rule 2.
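Rules 2 and 3 together can be sketched roughly as follows. This is an illustrative Python example, not code from any particular O/R mapper; the `Customer` class and its `change_address` method are hypothetical names chosen to match the "Change customer address" task above.

```python
class Customer:
    """Domain object with read-only state and one method per user task."""

    def __init__(self, customer_id: str, address: str, is_active: bool = True) -> None:
        self._customer_id = customer_id
        self._address = address
        self._is_active = is_active

    @property
    def address(self) -> str:
        # Read access only: no setter is defined for this property.
        return self._address

    def change_address(self, new_address: str) -> None:
        """Check the client's data against current state, then update one field."""
        if not self._is_active:
            raise ValueError("cannot change the address of an inactive customer")
        cleaned = new_address.strip()
        if not cleaned:
            raise ValueError("address must not be empty")
        self._address = cleaned


# Get an object, call a method.
customer = Customer("c-1", "1 Old Road")
customer.change_address("  2 New Street ")
print(customer.address)
```

Because the only way to change the address is through `change_address`, the service layer cannot blindly overwrite unrelated fields, and the validation lives next to the data it protects.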

Before wrapping up, one small thing.

You can use an O/R mapping tool to do reporting, just, for the love of Bill, don’t use the same classes you designed for your OLTP domain model. But just because you can doesn’t necessarily mean you should. Datasets and datatables are probably just as viable a solution.

Posted in Architecture, Business Rules, Data Access, Scalability | 23 Comments »

Monday, June 23rd, 2008

It turns out that there was a subtle, yet dangerous problem in the use of System.Transactions – a transaction could timeout, rollback, and the connection bound to that transaction could still change data in the database.

Think about that a second.

Scary, isn’t it?

At TechEd Israel I had a discussion with Manu on this very issue, just under a different hat:

What’s the difference between a short-running workflow and a long-running one?

Manu suggested that we look at the actual time that things ran to differentiate between them. I asserted that if any external communication was involved in some part of state-management logic, that logic should automatically be treated as long-running.

Manu’s reasoning was that the complexity involved in writing long-running workflows was not justified for things that ran quickly, even if there was communication involved. Many developers don’t think twice about synchronously calling some web services in the middle of their database transaction logic. In the many Microsoft presentations I’ve been at on WF, not once has it been mentioned that state machines should be used when external communication is involved.

The problem that I have with this guidance is how do you know how quickly a remote call will return?

Do you just run it all locally on your machine, measure, and if it doesn’t take more than a second or so, then you’re OK?

The fact of the matter is that we can never know what the response time of a remote call will be. Maybe the remote machine is down. Maybe the remote process is down. Maybe someone changed the firewall settings and now we’re doing 10KB/s instead of 10MB/s. Maybe the local service is down and we’re communicating with the backup on the other side of the Pacific Ocean.

But the thing is, Manu’s right.

Writing long-running workflows (with WF) is more complex than is justified. My guess is that this complexity crept in because WF wasn’t designed specifically for long-running workflows.

Sagas in nServiceBus were specifically designed for long-running workflows only.

Maybe that’s what kept them simple.

Since all external communication is done via one-way, non-blocking messaging only, each step of a saga runs as quickly as if no communication were done at all. This keeps the time the transaction in charge of handling a message is open as short as possible. That, in turn, leads to the database being able to support more concurrent users.
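The shape of such a saga can be sketched like this. It is a simplified Python illustration under stated assumptions, not the nServiceBus saga API: `RecordingBus`, `OrderSaga`, and the message names are all hypothetical, and persisting saga state between messages is left out.

```python
from dataclasses import dataclass, field


@dataclass
class RecordingBus:
    """Stand-in for a one-way bus: send() queues the message and returns at once."""
    sent: list = field(default_factory=list)

    def send(self, message: dict) -> None:
        self.sent.append(message)


@dataclass
class OrderSaga:
    """Hypothetical long-running process; real state would be persisted per step."""
    bus: RecordingBus
    order_id: str = ""
    payment_authorized: bool = False
    completed: bool = False

    def handle_order_placed(self, message: dict) -> None:
        # Step 1: record state and request payment. No waiting for a reply.
        self.order_id = message["order_id"]
        self.bus.send({"type": "AuthorizePayment", "order_id": self.order_id})

    def handle_payment_authorized(self, message: dict) -> None:
        # Step 2: runs whenever the reply arrives, as its own short unit of work.
        self.payment_authorized = True
        self.bus.send({"type": "ShipOrder", "order_id": self.order_id})
        self.completed = True


bus = RecordingBus()
saga = OrderSaga(bus)
saga.handle_order_placed({"order_id": "o-42"})
# ...any amount of time may pass here without a transaction being held open...
saga.handle_payment_authorized({"order_id": "o-42"})
print([m["type"] for m in bus.sent])
```

Neither step blocks on a remote call, so how long each one runs depends only on local work, however slow the remote party turns out to be.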

In short, sagas are both more scalable and more robust.

No need to worry about garbaging-up your database.

Posted in Databases, NServiceBus, Scalability, Workflow | 2 Comments »

Recommendations

Bryan Wheeler, Director Platform Development at msnbc.com

“Udi Dahan is the real deal. We brought him on site to give our development staff the 5-day “Advanced Distributed System Design” training. The course profoundly changed our understanding and approach to SOA and distributed systems. Consider some of the evidence: 1. Months later, developers still make allusions to concepts learned in the course nearly every day. 2. One of our developers went home and made her husband (a developer at another company) sign up for the course at a subsequent date/venue. 3. Based on what we learned, we’ve made constant improvements to our architecture that have helped us to adapt to our ever changing business domain at scale and speed. If you have the opportunity to receive the training, you will make a substantial paradigm shift. If I were to do the whole thing over again, I’d start the week by playing the clip from the Matrix where Morpheus offers Neo the choice between the red and blue pills. Once you make the intellectual leap, you’ll never look at distributed systems the same way. Beyond the training, we were able to spend some time with Udi discussing issues unique to our business domain. Because Udi is a rare combination of a big picture thinker and a low level doer, he can quickly hone in on various issues and quickly make good (if not startling) recommendations to help solve tough technical issues.” November 11, 2010

Sam Gentile, Independent WCF & SOA Expert

“Udi, one of the great minds in this area. A man I respect immensely.”

Ian Robinson, Principal Consultant at ThoughtWorks

"Your blog and articles have been enormously useful in shaping, testing and refining my own approach to delivering on SOA initiatives over the last few years. Over and against a certain 3-layer-application-architecture-blown-out-to- distributed-proportions school of SOA, your writing, steers a far more valuable course."

Shy Cohen, Senior Program Manager at Microsoft

“Udi is a world renowned software architect and speaker. I met Udi at a conference that we were both speaking at, and immediately recognized his keen insight and razor-sharp intellect. Our shared passion for SOA and the advancement of its practice launched a discussion that lasted into the small hours of the night. It was evident through that discussion that Udi is one of the most knowledgeable people in the SOA space. It was also clear why – Udi does not settle for mediocrity, and seeks to fully understand (or define) the logic and principles behind things. Humble yet uncompromising, Udi is a pleasure to interact with.”

Glenn Block, Senior Program Manager - WCF at Microsoft

“I have known Udi for many years having attended his workshops and having several personal interactions including working with him when we were building our Composite Application Guidance in patterns & practices. What impresses me about Udi is his deep insight into how to address business problems through sound architecture. Backed by many years of building mission critical real world distributed systems it is no wonder that Udi is the best at what he does. When customers have deep issues with their system design, I point them Udi's way.”

Karl Wannenmacher, Senior Lead Expert at Frequentis AG

“I have been following Udi’s blog and podcasts since 2007. I’m convinced that he is one of the most knowledgeable and experienced people in the field of SOA, EDA and large scale systems.

Udi helped Frequentis to design a major subsystem of a large mission critical system with a nationwide deployment based on NServiceBus. It was impressive to see how he took the initial architecture and turned it upside down leading to a very flexible and scalable yet simple system without knowing the details of the business domain.

I highly recommend consulting with Udi when it comes to large scale mission critical systems in any domain.”

Simon Segal, Independent Consultant

“Udi is one of the outstanding software development minds in the world today, his vast insights into Service Oriented Architectures and Smart Clients in particular are indeed a rare commodity. Udi is also an exceptional teacher and can help lead teams to fall into the pit of success. I would recommend Udi to anyone considering some Architectural guidance and support in their next project.”

Ohad Israeli, Chief Architect at Hewlett-Packard, Indigo Division

“When you need a man to do the job Udi is your man! No matter if you are facing near deadline deadlock or at the early stages of your development, if you have a problem Udi is the one who will probably be able to solve it, with his large experience at the industry and his widely horizons of thinking , he is always full of just in place great architectural ideas.

I am honored to have Udi as a colleague and a friend (plus having his cell phone on my speed dial).”

Ward Bell, VP Product Development at IdeaBlade

“Everyone will tell you how smart and knowledgeable Udi is ... and they are oh-so-right. Let me add that Udi is a smart LISTENER. He's always calibrating what he has to offer with your needs and your experience ... looking for the fit. He has strongly held views ... and the ability to temper them with the nuances of the situation. I trust Udi to tell me what I need to hear, even if I don't want to hear it, ... in a way that I can hear it. That's a rare skill to go along with his command and intelligence.”

Eli Brin, Program Manager at RISCO Group

“We hired Udi as a SOA specialist for a large scale project. The development is outsourced to India. SOA is a buzzword used almost for anything today. We wanted to understand what SOA really is, and what is the meaning and practice to develop a SOA based system.

We identified Udi as the one that can put some sense and order in our minds. We started with a private customized SOA training for the entire team in Israel. After that I had several focused sessions regarding our architecture and design.

I will summarize it simply (as he is the software simplist): We are very happy to have Udi in our project. It has a great benefit. We feel good and assured with the knowledge and practice he brings. He doesn’t talk over our heads. We assimilated nServicebus as the ESB of the project. I highly recommend you to bring Udi into your project.”

Catherine Hole, Senior Project Manager at the Norwegian Health Network

“My colleagues and I have spent five interesting days with Udi - diving into the many aspects of SOA. Udi has shown impressive abilities of understanding organizational challenges, and has brought the business perspective into our way of looking at services. He has an excellent understanding of the many layers from business at the top to the technical infrastructure at the bottom. He is a great listener, and manages to simplify challenges in a way that is understandable both for developers and CEOs, and all the specialists in between.”

Yoel Arnon, MSMQ Expert

“Udi has a unique, in depth understanding of service oriented architecture and how it should be used in the real world, combined with excellent presentation skills. I think Udi should be a premier choice for a consultant or architect of distributed systems.”

Vadim Mesonzhnik, Development Project Lead at Polycom

“When we were faced with a task of creating a high performance server for a video-tele conferencing domain we decided to opt for a stateless cluster with SQL server approach. In order to confirm our decision we invited Udi.

After carefully listening for 2 hours he said: "With your kind of high availability and performance requirements you don’t want to go with stateless architecture."

One simple sentence saved us from implementing a wrong product and finding that out after years of development. No matter whether our former decisions were confirmed or altered, it gave us great confidence to move forward relying on the experience, industry best-practices and time-proven techniques that Udi shared with us.

It was a distinct pleasure and a unique opportunity to learn from someone who is among the best at what he does.”

Jack Van Hoof, Enterprise Integration Architect at Dutch Railways

“Udi is a respected visionary on SOA and EDA, whose opinion I most of the time (if not always) highly agree with. The nice thing about Udi is that he is able to explain architectural concepts in terms of practical code-level examples.”

Neil Robbins, Applications Architect at Brit Insurance

“Having followed Udi's blog and other writings for a number of years I attended Udi's two day course on 'Loosely Coupled Messaging with NServiceBus' at SkillsMatter, London.

I would strongly recommend this course to anyone with an interest in how to develop IT systems which provide immediate and future fitness for purpose. An influential and innovative thought leader and practitioner in his field, Udi demonstrates and shares a phenomenally in depth knowledge that proves his position as one of the premier experts in his field globally.

The course has enhanced my knowledge and skills in ways that I am able to immediately apply to provide benefits to my employer. Additionally though I will be able to build upon what I learned in my 2 days with Udi and have no doubt that it will only enhance my future career.

I cannot recommend Udi, and his courses, highly enough.”

Nick Malik, Enterprise Architect at Microsoft Corporation

“You are an excellent speaker and trainer, Udi, and I've had the fortunate experience of having attended one of your presentations. I believe that you are a knowledgeable and intelligent man.”

Sean Farmar, Chief Technical Architect at Candidate Manager Ltd

“Udi has provided us with guidance in system architecture and supports our implementation of NServiceBus in our core business application.

He accompanied us in all stages of our development cycle and helped us put vision into real life distributed scalable software. He brought fresh thinking, great in depth of understanding software, and ongoing support that proved as valuable and cost effective.

Udi has the unique ability to analyze the business problem and come up with a simple and elegant solution for the code and the business alike. With Udi's attention to details, and knowledge we avoided pit falls that would cost us dearly.”

Børge Hansen, Architect Advisor at Microsoft

“Udi delivered a 5 hour long workshop on SOA for aspiring architects in Norway. While keeping everyone awake and excited Udi gave us some great insights and really delivered on making complex software challenges simple. Truly the software simplist.”

Motty Cohen, SW Manager at KorenTec Technologies

“I know Udi very well from our mutual work at KorenTec. During the analysis and design of a complex, distributed C4I system - where the basic concepts of NServiceBus start to emerge - I gained a lot of "Udi's hours" so I can surely say that he is a professional, skilled architect with fresh ideas and unique perspective for solving complex architecture challenges. His ideas, concepts and parts of the artifacts are the basis of several state-of-the-art C4I systems that I was involved in their architecture design.”

Aaron Jensen, VP of Engineering at Eleutian Technology

“Awesome. Just awesome.

We’d been meaning to delve into messaging at Eleutian after multiple discussions with and blog posts from Greg Young and Udi Dahan in the past. We weren’t entirely sure where to start, how to start, what tools to use, how to use them, etc. Being able to sit in a room with Udi for an entire week while he described exactly how, why and what he does to tackle a massive enterprise system was invaluable to say the least.

We now have a much better direction and, more importantly, have the confidence we need to start introducing these powerful concepts into production at Eleutian.”

Gad Rosenthal, Department Manager at Retalix

“A thinking person. Brought fresh and valuable ideas that helped us in architecting our product. When recommending a solution he supports it with evidence and detail so you can successfully act based on it. Udi's support "comes on all levels" - As the solution architect through to the detailed class design. Trustworthy!”

Chris Bilson, Developer at Russell Investment Group

“I had the pleasure of attending a workshop Udi led at the Seattle ALT.NET conference in February 2009. I have been reading Udi's articles and listening to his podcasts for a long time and have always looked to him as a source of advice on software architecture. When I actually met him and talked to him I was even more impressed. Not only is Udi an extremely likable person, he's got that rare gift of being able to explain complex concepts and ideas in a way that is easy to understand. All the attendees of the workshop greatly appreciate the time he spent with us and the amazing insights into service oriented architecture he shared with us.”

Alexey Shestialtynov, Senior .Net Developer at Candidate Manager

“I met Udi at Candidate Manager where he was brought in part-time as a consultant to help the company make its flagship product more scalable. For me, even after 30 years in software development, working with Udi was a great learning experience. I simply love his fresh ideas and architecture insights. As we all know it is not enough to be armed with best tools and technologies to be successful in software - there is still human factor involved. When, as it happens, the project got in trouble, management asked Udi to step into a leadership role and bring it back on track. This he did in the span of a month. I can only wish that things had been done this way from the very beginning. I look forward to working with Udi again in the future.”

Christopher Bennage, President at Blue Spire Consulting, Inc.

“My company was hired to be the primary development team for a large scale and highly distributed application. Since these are not necessarily everyday requirements, we wanted to bring in some additional expertise. We chose Udi because of his blogging, podcasting, and speaking. We asked him to review our architectural strategy as well as the overall viability of the project.

I was very impressed, as Udi demonstrated a broad understanding of the sorts of problems we would face. His advice was honest and unbiased and very pragmatic. Whenever I questioned him on particular points, he was able to backup his opinion with real life examples.

I was also impressed with his clarity and precision. He was very careful to untangle the meaning of words that might be overloaded or otherwise confusing. While Udi's hourly rate may not be the cheapest, the ROI is undoubtedly a deal.

I would highly recommend consulting with Udi.”

Robert Lewkovich, Product / Development Manager at Eggs Overnight

“Udi's advice and consulting were a huge time saver for the project I'm responsible for. The $ spent were well worth it and provided me with a more complete understanding of nServiceBus and most importantly in helping make the correct architectural decisions earlier thereby reducing later, and more expensive, rework.”

Ray Houston, Director of Development at TOPAZ Technologies

“Udi's SOA class made me smart - it was awesome.

The class was very well put together. The materials were clear and concise and Udi did a fantastic job presenting it. It was a good mixture of lecture, coding, and question and answer. I fully expected that I would be taking notes like crazy, but it was so well laid out that the only thing I wrote down the entire course was what I wanted for lunch. Udi provided us with all the lecture materials and everyone has access to all of the samples which are in the nServiceBus trunk.

Now I know why Udi is the "Software Simplist." I was amazed to find that all the code and solutions were indeed very simple. The patterns that Udi presented keep things simple by isolating complexity so that it doesn't creep into your day to day code. The domain code looks the same if it's running in a single process or if it's running in 100 processes.”

Ian Cooper, Team Lead at Beazley

“Udi is one of the leaders in the .Net development community, one of the truly smart guys who do not just get best architectural practice well enough to educate others but drives innovation. Udi consistently challenges my thinking in ways that make me better at what I do.”

Liron Levy, Team Leader at Rafael

“I've met Udi when I worked as a team leader in Rafael. One of the most senior managers there knew Udi because he was doing superb architecture job in another Rafael project and he recommended bringing him on board to help the project I was leading. Udi brought with him fresh solutions and invaluable deep architecture insights. He is an authority on SOA (service oriented architecture) and this was a tremendous help in our project. On the personal level - Udi is a great communicator and can persuade even the most difficult audiences (I was part of such an audience myself..) by bringing sound explanations that draw on his extensive knowledge in the software business. Working with Udi was a great learning experience for me, and I'll be happy to work with him again in the future.”

Adam Dymitruk, Director of IT at Apara Systems

“I met Udi for the first time at DevTeach in Montreal back in early 2007. While Udi is usually involved in SOA subjects, his knowledge spans all of a software development company's concerns. I would not hesitate to recommend Udi for any company that needs excellent leadership, mentoring, problem solving, application of patterns, implementation of methodologies and straight out solution development. There are very few people in the world that are as dedicated to their craft as Udi is to his. At ALT.NET Seattle, Udi explained many core ideas about SOA. The team that I brought with me found his workshop and other talks the highlight of the event and provided the most value to us and our organization. I am thrilled to have the opportunity to recommend him.”

Eytan Michaeli, CTO Korentec

“Udi was responsible for a major project in the company, and as a chief architect designed a complex multi server C4I system with many innovations and excellent performance.”

Carl Kenne, .Net Consultant at Dotway AB

“Udi's session "DDD in Enterprise apps" was truly an eye opener. Udi has a great ability to explain complex enterprise designs in a very comprehensive and inspiring way. I've seen several sessions on both DDD and SOA in the past, but Udi puts it in a completely new perspective and makes us understand what it's all really about. If you ever have a chance to see any of Udi's sessions in the future, take it!”

Avi Nehama, R&D Project Manager at Retalix

“Not only that Udi is a brilliant software architecture consultant, he also has remarkable abilities to present complex ideas in a simple and concise manner, and...

always with a smile. Udi is indeed a top-league professional!”

Ben Scheirman, Lead Developer at CenterPoint Energy

“Udi is one of those rare people who not only deeply understands SOA and domain driven design, but also eloquently conveys that in an easy to grasp way. He is patient, polite, and easy to talk to. I'm extremely glad I came to his workshop on SOA.”

Scott C. Reynolds, Director of Software Engineering at CBLPath

“Udi is consistently advancing the state of thought in software architecture, service orientation, and domain modeling.

His mastery of the technologies and techniques is second to none, but he pairs that with a singular ability to listen and communicate effectively with all parties, technical and non, to help people arrive at context-appropriate solutions.

Every time I have worked with Udi, or attended a talk of his, or just had a conversation with him I have come away from it enriched with new understanding about the ideas discussed.”

Evgeny-Hen Osipow, Head of R&D at PCLine

“Udi has helped PCLine on projects by implementing architectural blueprints demonstrating the value of simple design and code.”

Rhys Campbell, Owner at Artemis West

“For many years I have been following the works of Udi. His explanation of often complex design and architectural concepts are so cleanly broken down that even the most junior of architects can begin to understand these concepts. These concepts however tend to typify the "real world" problems we face daily so even the most experienced software expert will find himself in an "Aha!" moment when following Udi teachings.

It was a pleasure to finally meet Udi in Seattle Alt.Net OpenSpaces 2008, where I was pleasantly surprised at how down-to-earth and approachable he was. His depth and breadth of software knowledge also became apparent when discussion with his peers quickly dove deep into the problems we currently face. If given the opportunity to work with or recommend Udi I would quickly take that chance. When I think .Net Architecture, I think Udi.”

Sverre Hundeide, Senior Consultant at Objectware

“Udi had been hired to present the third LEAP master class in Oslo. He is a well-known international expert on enterprise software architecture and design, and is the author of the open source messaging framework nServiceBus.

The entire class was based on discussion and interaction with the audience, and the only Power Point slide used was the one showing the agenda.

He started out with sketching a naive traditional n-tier application (big ball of mud), and based on suggestions from the audience we explored different solutions which might improve the solution. Whatever suggestions we threw at him, he always had a thoroughly considered answer describing pros and cons with the suggested solution. He obviously has a lot of experience with real world enterprise SOA applications.”

Raphaël Wouters, Owner/Managing Partner at Medinternals

“I attended Udi's excellent course 'Advanced Distributed System Design with SOA and DDD' at Skillsmatter. Few people can truly claim such a high skill and expertise level, present it using a pragmatic, concrete no-nonsense approach and still stay reachable.”

Nimrod Peleg, Lab Engineer at Technion IIT

“One of the best programmers and software engineers I've ever met, creative, knows how to design and implement, very collaborative and finally - the applications he designed and implemented work for many years without any problems!”

Jose Manuel Beas

“When I attended Udi's SOA Workshop, then it suddenly changed my view of what Service Oriented Architectures were all about. Udi explained complex concepts very clearly and created a very productive discussion environment where all the attendees could learn a lot. I strongly recommend hiring Udi.”

Daniel Jin, Senior Lead Developer at PJM Interconnection

“Udi is one of the top SOA guru in the .NET space. He is always eager to help others by sharing his knowledge and experiences. His blog articles often offer deep insights and is a invaluable resource. I highly recommend him.”

Pasi Taive, Chief Architect at Tieto

“I attended both of Udi's "UI Composition Key to SOA Success" and "DDD in Enterprise Apps" sessions and they were exceptionally good. I will definitely participate in his sessions again. Udi is a great presenter and has the ability to explain complex issues in a manner that everyone understands.”

Eran Sagi, Software Architect at HP

“So far, I heard about Service Oriented architecture all over.

Everyone mentions it – the big buzz word.

But, when I actually asked someone for what does it really mean, no one managed to give me a complete satisfied answer.

Finally in his excellent course “Advanced Distributed Systems”, I got the answers I was looking for.

Udi went over the different motivations (principles) of Services Oriented, explained them well one by one, and showed how each one could be technically addressed using NService bus.

In his course, Udi also explain the way of thinking when coming to design a Service Oriented system.

What are the questions you need to ask yourself in order to shape your system, place the logic in the right places for best Service Oriented system.

I would recommend this course for any architect or developer who deals with distributed system, but not only.

In my work we do not have a real distributed system, but one PC which host both the UI application and the different services inside, all communicating via WCF.

I found that many of the architecture principles and motivations of SOA apply for our system as well. Enough that you have SW partitioned into components and most of the principles becomes relevant to you as well.

Bottom line – an excellent course recommended to any SW Architect, or any developer dealing with distributed system.”

Guest Authored Books

Data volatility also has an impact. Let’s say that we’re building a financial trading system. The time between receiving an event (message) saying that the cost of a certain financial instrument has changed and sending the message requesting to buy that security is critical. Let’s say that has to be done in under 10ms. Now, some failure has occurred preventing our message from reaching its destination for 20ms. What should we do with that message? Should we keep it around, making sure it doesn’t get lost? Not in this domain. On the contrary, that message should be thrown away as its “business lifetime” has been exceeded. Furthermore, even during that original period of 10ms, the use of durable messaging may make it close to impossible to maintain our response times.
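The "business lifetime" rule can be expressed as a small check on the receiving side. The Python sketch below is illustrative only: `BuyRequest`, `should_process`, and `receive` are hypothetical names, timestamps are plain integers in milliseconds, and the 10ms lifetime and 20ms delay are the numbers from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuyRequest:
    instrument: str
    sent_at_ms: int    # when the sender created the message
    lifetime_ms: int   # how long the message is still worth acting on


def should_process(message: BuyRequest, now_ms: int) -> bool:
    """Return True only while the message is within its business lifetime."""
    return now_ms - message.sent_at_ms <= message.lifetime_ms


def receive(messages, now_ms):
    """Keep fresh messages; drop expired ones rather than storing or retrying them."""
    return [m for m in messages if should_process(m, now_ms)]


now = 1_000
fresh = BuyRequest("ACME", sent_at_ms=now - 5, lifetime_ms=10)    # 5ms old
stale = BuyRequest("ACME", sent_at_ms=now - 20, lifetime_ms=10)   # delayed 20ms
print(receive([fresh, stale], now))
```

The stale request is dropped on purpose. In this domain, acting on a 20ms-old price is worse than not acting at all, which is the opposite of the usual "never lose a message" default.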